Thursday, November 11, 2010

System Test Team Reports Available on developerWorks

In one recent report, "Leverage Business Level Applications (BLA) to Improve Management and Operational Capabilities Over Stand-alone Java EE Applications", Feng Yue Li from the WebSphere system test team describes a test scenario that focused on the use of shared libraries within BLAs. She explains how she verified that shared library relationships between BLA composition units work properly when a new version of a BLA asset is rolled out, and describes how command assistance can be used with BLAs.

In another recent report, "Test Infrastructure: OSGi FeP and JEE applications", tester Tam Dinh outlines a scenario in which the WebSphere system test team deployed, exercised, and stressed the WebSphere Application Server V7.0 OSGi Feature Pack using three OSGi applications and three JEE applications in a WebSphere Application Server Network Deployment cell.

We'd like to hear your feedback about our work and your ideas about how our testing can be improved. Visit our developerWorks test team wiki and let us know what you think.

Friday, October 8, 2010

New: WebSphere Batch Feature Pack!

This new feature pack provides support for a Java Batch programming model, offers tools and operational controls for Batch workload execution, enables development and deployment of batch applications, and allows concurrent execution of batch and OLTP workloads.

The Batch Feature Pack is targeted toward developers and basic production deployment. It delivers a subset of the functionality of IBM WebSphere Extended Deployment Compute Grid. Batch applications built using the feature pack are fully upward compatible with the Compute Grid environment.

The Compute Grid product offers advanced features, including support for parallel processing, workload scheduler integration, usage accounting, and more. You can start with the Feature Pack (FeP) for Modern Batch and grow it into a full Compute Grid environment. The Batch Deployment Options Chart outlines the functional continuum among these offerings.

Tuesday, October 5, 2010

Joint WAS XML Feature Pack and DB2 pureXML Article on FpML Lives

Programming XML across the multiple tiers, Part 2: Write efficient Java EE applications that exploit an XML database server

This article uses Financial Products Markup Language (FpML) as an example to show how to program realistic native XML applications across WebSphere Application Server and DB2 pureXML. It shows how you can use one consistent programming model (XQuery) and one consistent data model (XML) for data access, transformation, and filtering in both the mid tier and the database tier. Because this single data model doesn't require mapping to Java objects, it should increase the agility of your XML-centric applications: no mapping code needs to be generated or maintained in either tier.
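To make "no mapping to Java objects" concrete, here is a minimal sketch that queries an XML document directly from Java. It is an assumption-laden stand-in: the article itself uses XQuery through the XML Feature Pack, while this sketch uses only the XPath 1.0 engine that ships with the JDK (javax.xml.xpath), and the trade document, its element names, and the class NativeXmlQuery are hypothetical and far simpler than real FpML.

```java
import java.io.StringReader;
import javax.xml.xpath.XPath;
import javax.xml.xpath.XPathConstants;
import javax.xml.xpath.XPathExpressionException;
import javax.xml.xpath.XPathFactory;
import org.xml.sax.InputSource;

class NativeXmlQuery {

    // Hypothetical, heavily simplified trade document: NOT the real FpML schema
    // (real FpML uses namespaces and a much richer structure).
    static final String TRADES =
            "<trades>"
            + "<trade id='t1'><currency>USD</currency><notional>1000000</notional></trade>"
            + "<trade id='t2'><currency>EUR</currency><notional>250000</notional></trade>"
            + "<trade id='t3'><currency>USD</currency><notional>500000</notional></trade>"
            + "</trades>";

    // Sums the notional of all trades in one currency by querying the XML itself:
    // no generated Java classes and no XML-to-object mapping code to maintain.
    static double totalNotional(String xml, String currency) {
        XPath xpath = XPathFactory.newInstance().newXPath();
        // Bind $ccy through a variable resolver instead of concatenating user input
        // into the expression. Every variable resolves to the currency, which is
        // fine here because the expression uses only one variable.
        xpath.setXPathVariableResolver(name -> currency);
        try {
            Double total = (Double) xpath.evaluate(
                    "sum(/trades/trade[currency = $ccy]/notional)",
                    new InputSource(new StringReader(xml)),
                    XPathConstants.NUMBER);
            return total;
        } catch (XPathExpressionException e) {
            throw new IllegalStateException("XPath evaluation failed", e);
        }
    }

    public static void main(String[] args) {
        System.out.println(totalNotional(TRADES, "USD")); // 1500000.0
        System.out.println(totalNotional(TRADES, "EUR")); // 250000.0
    }
}
```

The same idea scales up in the article's approach: with XQuery available in both tiers, the filtering and transformation logic stays in one language and one data model instead of being split between SQL/XML, mapping code, and Java.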

Even though the article is based upon FpML (which is really useful to the financial sector), the concept is applicable to all industries that have substantial amounts of XML data.

The article has code attached (both a Rational Application Developer EAR project and Eclipse/Ant builds) and database load scripts, so you can play with the code to see how it works. All you need to do is define the JDBC resources to point to your DB2 instance. I also have a virtual image for this, based upon the free-of-charge WebSphere Application Server for Developers and DB2 Express-C, in case you're interested.

Monday, September 27, 2010

WebSphere V8.0 Beta Feature Focus Weeks

IBM WebSphere Application Server V8.0 Beta Forums

Many of the feature focus discussions include demos and extensive information on the features.

Some topics that were discussed recently include XML Application Programming, SIP Servlets, Communication Enabled Applications, Installation on z/OS, JSP 2.2, JAX-RS, Java EE 6 Web Services, EJB 3.1, JPA 2.0, Security Enhancements, and Servlet 3.0.

Many other topics have been discussed as well. Just scroll back through the thread to find and comment on many of the new useful features coming in version 8.0.

Friday, August 13, 2010

A view from the road

Last week I was at Balisage 2010 talking about Web 2.0 and XML, specifically how introducing MVC frameworks into Dojo (and other Web 2.0 widget libraries) adds all sorts of interesting value. Also, once MVC is in place, XML-centric models integrate better into the browser. Specifically, I discussed Ubiquity XForms. The goal of this work is a clean end-to-end XML story, from storage through mid-tier joins and queries, that exposes REST/XML to the browser in its original form. This avoids having to write XML-to-POJO-to-JSON conversion routines for data that originates and is stored in XML, a common case among many clients I talk to.

This week, I've been traveling between New York and New Jersey. I've been hearing about how the financial and insurance industries work with XML. I've heard about how closely related enterprise content management systems and data storage systems are. I've heard about how XQuery is being used as a general-purpose programming model on top of such data. However, I've also heard about the challenges of linking these systems together, and that "hybrid servers" that let XML bridge into relational systems, Java systems, and JSON are important. Finally, I built a nice VMware-based demo of FpML processing across DB2 pureXML and the WebSphere XML Feature Pack. If you're interested in seeing how to efficiently program native XML end to end (whether you're into FpML or not), let me know and we can arrange a demo.

Friday, July 9, 2010

RAD Tooling for OSGi Applications

Built on top of the latest version of Eclipse (Helios), the RAD V8 Beta provides OSGi tools for enterprise Java developers. While most of the development activities, and hence development tools, for enterprise OSGi applications are common with Java EE, there are some new considerations. Primarily, these are around the compile-time classpaths, the authoring of new metadata (the OSGi bundle and application manifests) and, optionally, Blueprint bean definition files.

RAD introduces new project types for OSGi bundle projects and OSGi application projects, and a variety of new editors and tools for developing components in these types of projects. OSGi modularity semantics are honored in the project build paths to actively encourage modular design: each project has the scope of a single bundle, with Java package visibility between projects restricted to those packages explicitly imported/exported by the project's bundle manifest. This supports the careful and deliberate exposure of only those Java packages that are intended to be used outside a project. RAD's facet-based configuration enables OSGi projects to be configured as OSGi Web projects or OSGi JPA projects and integrates tools for authoring web.xml, persistence.xml and blueprint.xml. OSGi application projects, representing a complete enterprise application consisting of multiple bundles, can be imported from or exported to enterprise bundle archives, and can be tested and debugged on WAS V7 from the RAD workspace.

RAD's SCA development tools are also extended to support "OSGi Applications" as a new implementation type for SCA components. The SCA tools accelerate the assembly of SCA composites; these can include OSGi application components which may selectively expose OSGi services outside the OSGi application and define remote bindings for these services. There's more about the relationship between OSGi services and SCA services here.

One of the quickest ways to explore the new OSGi features in RAD is to import one of the sample applications provided with the WAS V7 feature pack into a RAD workspace. The feature pack's Blog Sample was described in an earlier post, so I'll use that as my example. Start RAD and run the File->Import->OSGi Application (EBA) wizard, entering the location of the com.ibm.ws.eba.example.blog.eba file (found in the WAS_INSTALL/feature_packs/aries/installableApps directory). Since one of the features this sample is designed to show is the deployment of an application which includes content provisioned from a bundle repository, you also need to make the com.ibm.json.java_1.0.0.jar available in the workspace, which you can do through File->Import->OSGi Bundle. The result is four OSGi bundle projects and an OSGi application project in the workspace, with everything correctly resolved.

You can see the relationships between the bundles in this application using RAD's new Bundle Explorer. The figure on the left shows the Bundle Explorer view of the Blog sample application project.

RAD gives you all the syntax assist, refactoring support, and problem quick-fixes you expect, as well as new editors for bundle manifests, application manifests and Blueprint bean definitions. By encapsulating business logic in POJO Blueprint beans and accessing persistent data through JPA entities, unit testing can be performed with simple frameworks like JUnit without requiring an application server to be running. And, of course, RAD includes integrated support for running and debugging entire OSGi applications on a WAS server, straight from the workspace.
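The point about unit testing POJO Blueprint beans without a server can be sketched as follows. This is a minimal, hypothetical example rather than code from the Blog Sample: EntryStore, PostService, and InMemoryEntryStore are illustrative names. The assumption is that a Blueprint container would inject the store through the setter (for example from a property element in blueprint.xml), while a test simply calls the setter itself with an in-memory fake in place of the JPA-backed implementation.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical persistence abstraction. In a real bundle this might be backed by
// JPA entities; here it is just an interface the POJO depends on.
interface EntryStore {
    void save(String title);
    List<String> titles();
}

// Business logic as a plain POJO with setter injection. A Blueprint container could
// call setStore(...) when wiring the bean; a unit test can simply call it directly.
class PostService {
    private EntryStore store;

    public void setStore(EntryStore store) {
        this.store = store;
    }

    // Rejects blank titles; otherwise stores the trimmed title.
    public boolean publish(String title) {
        if (title == null || title.isBlank()) {
            return false;
        }
        store.save(title.trim());
        return true;
    }

    public int entryCount() {
        return store.titles().size();
    }
}

// In-memory fake standing in for the JPA-backed store: no application server required.
class InMemoryEntryStore implements EntryStore {
    private final List<String> titles = new ArrayList<>();

    @Override
    public void save(String title) {
        titles.add(title);
    }

    @Override
    public List<String> titles() {
        return titles;
    }
}

class PostServiceCheck {
    public static void main(String[] args) {
        PostService service = new PostService();
        service.setStore(new InMemoryEntryStore());
        System.out.println(service.publish("Hello OSGi")); // true
        System.out.println(service.publish("   "));        // false
        System.out.println(service.entryCount());          // 1
    }
}
```

Because the bean never looks anything up from the container, the same class runs unchanged under Blueprint in WAS and in a plain JVM test such as the main method above or a JUnit case.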

WebSphere Application Server V8.0 Beta

There is a download for Windows, Linux, AIX, z/OS, Linux for System Z, HP-UX, and Solaris.

You can find out about the features offered in the beta via the following links:

- Overall "What's New"

- What's new for Installers, which includes a new unified installer and customization tools

- What's new for Developers, with new Servlet, EJB 3.1, JAXB/JAX-WS, JAX-RS, XML-centric programming (XPath 2.0/XSLT 2.0/XQuery 1.0), and SAML support, as well as auto-deploy of apps from a monitored directory

- What's new for Administrators and Performance, adding web service management support, high-performance logging, and enhanced caching under JPA

- What's new for Security which adds security hardening

- What's new for Problem Determination

There are far too many features to list here on the blog, so hopefully the above gives you enough reason to give it a spin. See the InfoCenter for further information.

Go ahead, download the beta, try it out and leave feedback on the forum.

Friday, June 25, 2010

Humor for Friday - Java-4-ever

Watch the Java 4-Ever Video

Now on YouTube

Thursday, June 3, 2010

OSGi applications and JPA2 feature pack has gone GA

- Support for development and deployment of enterprise applications using OSGi

- Support for Enterprise OSGi specs around Web application, Blueprint and JPA

- The ability to update individual bundles in an OSGi application

- Support for integrating OSGi and Java EE applications using SCA

- Support for Java Persistence API 2, both from Java EE and OSGi applications

- Development tooling for OSGi and JPA2 via the Rational Application Developer beta

- Good performance increase measured using the SPECjEnterprise2010 benchmark.

Sunday, May 30, 2010

The Cure for XML in Web 2.0?

Here is the abstract:

XForms offers a model-view framework for XML applications. Some developers take a data-centric approach, developing XForms applications by first specifying abstract operations on data and then gradually giving those operations a concrete user interface using XForms widgets. Other developers start from the user interface and develop the MVC model only as far as is needed to support the desired user experience. Tools and design methods suitable for one group may be unhelpful (at best) for the other. We explore a way to bridge this divide by working within the conventions of existing Ajax frameworks such as Dojo.

Interested? Let me know and we can get a review copy of the paper to you. I have talked to many clients who want to integrate their metadata-driven, XML-dominant data with their Web 2.0 work in Dojo and run into the impedance mismatch wall. Hopefully that wall will be coming down soon.

BTW, if you'd like to attend this great conference to hear about this topic and many others on the jam-packed agenda, here is a great link to use to convince your management to let you join us in Montreal.

Thursday, May 27, 2010

XQuery: Powerful, Simple, Cool .. "Demo"

First, I have an XML input file of all the downloads over a certain time period. That XML file could come from a web service, a JMS message, or an XML database. The data looks something like:

<?xml version="1.0" encoding="UTF-8"?>
<downloads>
  <download>
    <transaction>1</transaction>
    <userid>user1</userid>
    <uniqueCustomerId>uid-1</uniqueCustomerId>
    <filename>xml_and_import_repositories.zip</filename>
    <name>Mr. Andrew Spyker</name>
    <email>user@email.com</email>
    <companyname>IBM</companyname>
    <datedownloaded>2009-11-20</datedownloaded>
  </download>
  <!-- more download records repeating -->
</downloads>

First, I want to quickly get rid of all downloads that have "education" in the filename. Next, I want to split the downloads that come from IBM'ers (the email or company name contains some version of IBM) from the downloads that come from clients. Within those groups, I want to quickly group repeat downloaders (by uniqueCustomerId). I won't include it here, but I've shown how to write some of this with Java and DOM in the past. Suffice it to say that this code is very complex (imagine all the loops through the data you'd write for each of these steps). Let's look at these steps in XQuery:

(: Quickly get rid of education downloads :)
declare variable $allNonEducationDownloads := /downloads/download[not(contains(filename, '/education/'))];

(: Split the IBM downloads from non-IBM downloads :)
declare variable $allIBMDownloads :=
    $allNonEducationDownloads[contains(upper-case(email), 'IBM')] |
    $allNonEducationDownloads[contains(upper-case(companyname), 'IBM')] |
    $allNonEducationDownloads[contains(upper-case(companyname), 'INTERNATIONAL BUSINESS MACHINES')];

(: Get the unique IBM downloader ids :)
declare variable $allIBMUniqueIds := distinct-values($allIBMDownloads/uniqueCustomerId);

(: Get the non-IBM downloads :)
declare variable $allNonIBMDownloads := $allNonEducationDownloads except $allIBMDownloads;

(: Get the unique non-IBM downloader ids :)
declare variable $allNonIBMUniqueIds := distinct-values($allNonIBMDownloads/uniqueCustomerId);

I think the most powerful line of the above code is the "except" expression. In that one short statement, I can express that we want to take all the downloads and remove the IBM downloads, which leaves us with the non-IBM downloads. I think it's quite impressive that XQuery expresses the above statements in about the same number of lines as the English I used to describe the requirements.
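For comparison, here is roughly what the filter, the IBM split, and the "except" step look like with Java and DOM. This is a minimal sketch written for this comparison, not the author's earlier Java/DOM code; the class DownloadSplit and its helper methods are hypothetical, and only the element names come from the sample data above. Note how the one-line "except" becomes an explicit set difference by node identity.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Locale;
import java.util.Set;
import javax.xml.parsers.DocumentBuilderFactory;
import javax.xml.parsers.ParserConfigurationException;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;
import org.xml.sax.SAXException;

class DownloadSplit {

    // Text of the first descendant element with the given name, or "" if there is none.
    static String text(Element parent, String name) {
        NodeList list = parent.getElementsByTagName(name);
        return list.getLength() == 0 ? "" : list.item(0).getTextContent();
    }

    // Same test as the three unioned predicates in $allIBMDownloads.
    static boolean isIbm(Element download) {
        String email = text(download, "email").toUpperCase(Locale.ROOT);
        String company = text(download, "companyname").toUpperCase(Locale.ROOT);
        return email.contains("IBM") || company.contains("IBM")
                || company.contains("INTERNATIONAL BUSINESS MACHINES");
    }

    // Returns the userids of non-education, non-IBM downloads, in document order.
    static List<String> nonIbmUserIds(String xml) {
        Document doc;
        try {
            doc = DocumentBuilderFactory.newInstance().newDocumentBuilder()
                    .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
        } catch (ParserConfigurationException | SAXException | IOException e) {
            throw new IllegalArgumentException("Could not parse downloads", e);
        }
        NodeList all = doc.getElementsByTagName("download");

        // One explicit loop builds both sets that XQuery expressed as predicates.
        Set<Element> nonEducation = new LinkedHashSet<>();
        Set<Element> ibm = new LinkedHashSet<>();
        for (int i = 0; i < all.getLength(); i++) {
            Element d = (Element) all.item(i);
            if (text(d, "filename").contains("/education/")) {
                continue;
            }
            nonEducation.add(d);
            if (isIbm(d)) {
                ibm.add(d);
            }
        }

        // Set difference by node identity: the counterpart of
        // "$allNonEducationDownloads except $allIBMDownloads".
        Set<Element> nonIbm = new LinkedHashSet<>(nonEducation);
        nonIbm.removeAll(ibm);

        List<String> ids = new ArrayList<>();
        for (Element d : nonIbm) {
            ids.add(text(d, "userid"));
        }
        return ids;
    }

    public static void main(String[] args) {
        String xml = "<downloads>"
                + "<download><userid>user1</userid><filename>xml_and_import_repositories.zip</filename>"
                + "<email>user@email.com</email><companyname>IBM</companyname></download>"
                + "<download><userid>user2</userid><filename>samples.zip</filename>"
                + "<email>dev@example.com</email><companyname>Example Corp</companyname></download>"
                + "<download><userid>user3</userid><filename>/education/intro.zip</filename>"
                + "<email>student@example.com</email><companyname>Example U</companyname></download>"
                + "</downloads>";
        System.out.println(nonIbmUserIds(xml)); // [user2]
    }
}
```

Even this trimmed version needs a parser setup, a manual loop, and two collections to say what the XQuery says in two declarations.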

Additionally, since you are telling the runtime what you want to do instead of how you want to do it, our runtime can aggressively optimize the data access in ways that we couldn't if we had to try to understand what the Java byte codes were doing on top of the DOM programming model. Also, since XQuery is functional (the above variables are final), we could spread this work across multiple cores more safely than imperative code, as we can guarantee there are no side effects. This is why, as a performance guy, I think declarative languages are key to the future of performance.

Back to the code. For people used to XPath 1.0 and its lack of the built-in XML Schema types, dealing with things as simple as dates was problematic (they were just strings). Here are a couple of functions that show how, with typed values such as xs:date, XPath 2.0 and XQuery 1.0 are much more powerful than before:

declare function my:downloadsInDateRange($downloads, $startDate as xs:date, $endDate as xs:date) {
    $downloads[xs:date(datedownloaded) >= $startDate and xs:date(datedownloaded) <= $endDate]
};

declare function my:codeDownloadsInDateRange($downloads, $startDate as xs:date, $endDate as xs:date) {
    let $onlyCodeDownloads := my:onlyCodeDownloads($downloads)
    return my:downloadsInDateRange($onlyCodeDownloads, $startDate, $endDate)
};

These two functions give me a quick way to look for "code" downloads within a date range. In the first function, it's very easy to see that the function takes the downloads and returns only the subset whose datedownloaded is on or after the start date and on or before the end date. In the second function, you can see how easy it is to call the first function. At this point, I think many Java programmers might be saying "this isn't what I expected based on my previous work with XSLT". While XSLT is a great language for transformation (XSLT 2.0 even better), I think XQuery gets a little closer to a general-purpose language, with the ability to declare functions and variables in a terser syntax.
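The Java analogue of those two functions is worth a look, because it shows what the xs:date cast buys you: the text is parsed into a real date before comparing, rather than compared as a string. This is a minimal sketch with a hypothetical Download record holding only the fields used; since my:onlyCodeDownloads is not shown in the post, the sketch assumes a "code" download is one whose filename contains "repositories", the same test the post uses further down.

```java
import java.time.LocalDate;
import java.util.List;
import java.util.stream.Collectors;

class DateRangeFilter {

    // Hypothetical stand-in for a <download> element: only the fields used here.
    record Download(String filename, String dateDownloaded) {}

    // Mirrors my:downloadsInDateRange: parse the text as a date (as xs:date() does)
    // and keep only downloads with start <= date <= end.
    static List<Download> downloadsInDateRange(List<Download> downloads,
                                               LocalDate start, LocalDate end) {
        return downloads.stream()
                .filter(d -> {
                    LocalDate date = LocalDate.parse(d.dateDownloaded());
                    return !date.isBefore(start) && !date.isAfter(end);
                })
                .collect(Collectors.toList());
    }

    // Mirrors my:codeDownloadsInDateRange. Assumption: a "code" download is one whose
    // filename contains "repositories"; the real my:onlyCodeDownloads is not shown.
    static List<Download> codeDownloadsInDateRange(List<Download> downloads,
                                                   LocalDate start, LocalDate end) {
        List<Download> onlyCode = downloads.stream()
                .filter(d -> d.filename().contains("repositories"))
                .collect(Collectors.toList());
        return downloadsInDateRange(onlyCode, start, end);
    }

    public static void main(String[] args) {
        List<Download> downloads = List.of(
                new Download("xml_and_import_repositories.zip", "2009-10-31"),
                new Download("xml_and_import_repositories.zip", "2009-11-20"),
                new Download("slides.pdf", "2009-11-25"));
        LocalDate start = LocalDate.parse("2009-11-01");
        LocalDate end = LocalDate.parse("2009-11-30");
        System.out.println(downloadsInDateRange(downloads, start, end).size());     // 2
        System.out.println(codeDownloadsInDateRange(downloads, start, end).size()); // 1
    }
}
```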

Finally, let's cover two more powerful features: FLWOR expressions and XML construction. Once I have sliced and diced the data, I need to output it as an XML report. XQuery gives you a very nice way to mix XML and declarative code, as shown below:

declare function my:downloadsByUniqid($uniqid, $downloads) {
    for $id in $uniqid
    let
        $allDownloadsByUniqueId := $downloads[uniqueCustomerId = $id],
        $allCodeDownloadsByUniqueId := $downloads[uniqueCustomerId = $id and (contains(filename, 'repositories'))]
    return
        <downloadById id="{ $id }" codeDownloads="{ count($allCodeDownloadsByUniqueId) }" >
            <name>{ data($allDownloadsByUniqueId[1]/name) }</name>
            <companyName>{ data($allDownloadsByUniqueId[1]/companyname) }</companyName>
            <codeDownloads>
            {
                for $download in $allCodeDownloadsByUniqueId order by $download/datedownloaded return
                <download>
                    <filename>{ data($download/filename) }</filename>
                    <datedownloaded>{ data($download/datedownloaded) }</datedownloaded>
                </download>
            }
            </codeDownloads>
        </downloadById>
};

This shows how you can create new XML documents and quickly mix in XQuery code. Some people I've talked to think this looks as simple as a scripting language. Also, you'll see a for ($id in $uniqid), a let ($allDownloadsByUniqueId and others), and a return (the downloadById element). These three clauses are part of what people call FLWOR (pronounced "flower"), which stands for for, let, where, order by, return. The FLWOR expression is a very powerful construct, able to do all the sorts of joins of data you're used to in SQL, but in this example I've chosen to show how it can simplify code in the general case where joining data isn't the focus. For Java people, think of it as a much more powerful looping construct that brings the power of SQL to XML.
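To make the "more powerful looping construct" comparison concrete, here is a minimal Java sketch of the same for/let/order by/return shape, with each step labeled against the FLWOR clause it mirrors. It is an illustration only: the Download and Summary records are hypothetical stand-ins for the input elements and the downloadById output, and it builds Java objects rather than XML.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

class DownloadsById {

    // Hypothetical stand-in for a <download> element (dates kept as ISO-8601 text).
    record Download(String uniqueCustomerId, String name, String companyName,
                    String filename, String dateDownloaded) {}

    // Java shape of one <downloadById> element.
    record Summary(String id, String name, String companyName, List<Download> codeDownloads) {}

    static List<Summary> downloadsByUniqid(List<String> uniqids, List<Download> downloads) {
        List<Summary> result = new ArrayList<>();
        for (String id : uniqids) {                          // for $id in $uniqid
            List<Download> byId = new ArrayList<>();         // let $allDownloadsByUniqueId
            for (Download d : downloads) {
                if (d.uniqueCustomerId().equals(id)) {
                    byId.add(d);
                }
            }
            List<Download> code = new ArrayList<>();         // let $allCodeDownloadsByUniqueId
            for (Download d : byId) {
                if (d.filename().contains("repositories")) {
                    code.add(d);
                }
            }
            // order by $download/datedownloaded (ISO-8601 text sorts chronologically)
            code.sort(Comparator.comparing(Download::dateDownloaded));
            // $allDownloadsByUniqueId[1]: first matching record, or empty text if none
            String name = byId.isEmpty() ? "" : byId.get(0).name();
            String company = byId.isEmpty() ? "" : byId.get(0).companyName();
            result.add(new Summary(id, name, company, code)); // return <downloadById>
        }
        return result;
    }

    public static void main(String[] args) {
        List<Download> downloads = List.of(
                new Download("uid-1", "User One", "IBM", "b_repositories.zip", "2009-12-01"),
                new Download("uid-1", "User One", "IBM", "a_repositories.zip", "2009-11-20"),
                new Download("uid-2", "User Two", "Acme", "slides.pdf", "2009-11-21"));
        for (Summary s : downloadsByUniqid(List.of("uid-1", "uid-2"), downloads)) {
            System.out.println(s.id() + " " + s.companyName() + " " + s.codeDownloads().size());
        }
        // uid-1 IBM 2
        // uid-2 Acme 0
    }
}
```

The Java version still needs explicit accumulators for every let clause; the XQuery version also produces the finished XML report in the same expression.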

In the end, I have a 200-line program that takes all the download reports, organizes them by unique IBM vs. unique non-IBM ids, and produces a month-by-month summary. I'd be surprised if you could come up with anything shorter and more maintainable using Java and DOM. I hope this "demo" encourages you to consider using XQuery in your next project where you need to work with data.

Finally, if you find people trying to convince you that XQuery isn't capable enough to be a general language, take a look at a complete ray tracer written in XQuery in a mere 300 lines of code (a real statement of XQuery's power and brevity).

PS. You can download this XQuery program here and some sample input here. You can run them with the XML Feature Pack thin client, available here. The thin client is a general-purpose, Java-based XQuery processor that you can use for evaluation, and in production when used with WebSphere Application Server. All you need to do is download the thin client, unzip it, and run the following command:

.\executeXQuery.bat -input downloads-fake.xml summary.xq

Wednesday, May 26, 2010

Why the -outputfile switch in XML Thin Client is useful

I was recently working with a set of files that contained non-English Unicode characters and trying to process the data with XSLT 2.0 and XQuery 1.0. I was using the Thin Client for XML that is part of the XML Feature Pack which offers J2SE and command line invocation options for XSLT and XQuery when used in a WebSphere environment.

I did something like:

.\executeXSLT.bat -input input.xml stylesheet.xslt > temp.xml

.\executeXQuery.bat -input temp.xml query.xq > final.xml

And this resulted in something like:

... executeXSLT "works" fine ...

... executeXQuery "fails" with ...

An invalid XML character (Unicode: 0x[8D,3F,E6,8D]) was found in the element content of the document.
An invalid XML character (Unicode: 0x[8D,3F,E6,8D]) was found in the element content of the document.

I figured something was wrong with the encodings in the XSLT output method or the xml encoding of the files themselves or -- worse yet -- something wrong with our processor. After some quick thinking by my excellent team, they had me replace the output redirection (where my OS and console got a chance to see/mess with the data between the processor and temp.xml) with the -outputfile option (which allows the processor to directly write to the file) like:

.\executeXSLT.bat -input input.xml -outputfile temp.xml stylesheet.xslt

.\executeXQuery.bat -input temp.xml -outputfile final.xml query.xq

Problem solved. No corruption of the data.

Lesson learned: Keep all the data inside of the processor and don't introduce things (like the Windows Console) into the pipeline that won't honor (or know) the encoding.
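Here is a minimal sketch of why the redirection hurts, written as an analogy rather than a description of the thin client's internals. A console stage that only understands a narrow charset replaces characters it cannot represent, and Java's String.getBytes(Charset) substitutes '?' (0x3F, which also appears in the error message above) for unmappable characters in ISO-8859-1. Letting the producer write the file itself with an explicit UTF-8 encoding, which is the idea behind -outputfile, preserves the data. The class EncodingPipeline and its methods are hypothetical.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.Charset;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;

class EncodingPipeline {

    // Simulates output passing through a stage that only understands consoleCharset:
    // characters that charset cannot represent are replaced with '?' when encoded.
    static String throughConsole(String text, Charset consoleCharset) {
        byte[] written = text.getBytes(consoleCharset); // unmappable chars become '?'
        return new String(written, consoleCharset);
    }

    // The -outputfile idea: the producer writes the file itself, with an explicit
    // encoding, and the consumer reads it back with the same encoding.
    static String throughFile(String text) {
        try {
            Path tmp = Files.createTempFile("temp", ".xml");
            try {
                Files.writeString(tmp, text, StandardCharsets.UTF_8);
                return Files.readString(tmp, StandardCharsets.UTF_8);
            } finally {
                Files.deleteIfExists(tmp);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        // Three Japanese characters, written as escapes so the source stays ASCII.
        String data = "<name>\u65e5\u672c\u8a9e</name>";
        System.out.println(throughConsole(data, StandardCharsets.ISO_8859_1)); // <name>???</name>
        System.out.println(throughFile(data).equals(data));                    // true
    }
}
```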

Monday, May 3, 2010

New CEA demo videos..

The first one is doing some of the contact center widgets (like click to call then cobrowsing) on the iPhone:

Here is the coshopping between a user on an iPhone and a Desktop:

The next is a shorter and HD version of our JavaScript widget walk through:

Saturday, May 1, 2010

WWW2010/FutureWeb Conference Summary

I was able to hear some technical giants like Sir Tim Berners-Lee, Vint Cerf, Danah Boyd and Doc Searls. I was able to meet up with many people locally (including Paul Jones) as well as folks from across the world working to make the internet move into the future.

The content was as technical as it was social and political. While it's interesting to hear about the Semantic Web, HTML5, and all the cool new areas of search and data mining, it was equally valuable to hear about the impact the Web is having on education, healthcare, and media, to name a few. I also heard about the work many of the conference attendees are doing to change government processes for the better, and how involved that can be when the Web spans countries in ways no other technology does.

Some reflections on the technical content:

1) Facebook was bashed (a lot). I actually learned that, yet again, Facebook had opted me into sharing information without my understanding. The key takeaway from all of this bashing was that Facebook (and web technologies in general) have become a critical part of our culture. The information we all produce to create value for sites like Facebook and Twitter needs to be treated with care. Marketing folks salivate at the opportunities that this community-created content provides. However, just because we can share and use such data in ways that benefit our companies, we shouldn't assume we should.

2) Adobe/Apple was bashed (a lot). The value of open standards on the web is clear. Some of the stories shared by the panelists were quite interesting: talk of how the Internet was once just a radical idea that would never compete with the "serious networks" of the time shows how valuable standards can be, and how they have changed, and will continue to change, the world.

3) There was a great presentation by Carl Malamud on "Rules for Radicals" that documented 10 rules for making large changes to government and technology; the rules apply equally well to working within a large corporation. Note that while takeaways #1 and #2 got a lot of press, the fact is there were many iPads, MacBooks, and Facebook-organized meetups. Carl's presentation showed that we need to work to effect change within these communities. Here is a quick video summary of the rules.

4) I've had it on my TODO list for some time now to look at the building blocks of the Semantic Web. I needed to understand how RDF/RDFa and SPARQL relate to XML and XQuery. I'm starting to form some opinions based upon what I've heard at the conference and the work I've done this week to play with the technologies. I can say with certainty that Web 3.0 (the web for machines, vs. Web 2.0, which was the web for humans) and its related technologies, RDF and SPARQL, are not going away. I can also say that RDF/SPARQL doesn't compete with XML/XQuery. I can see that we'll need to bridge the gap between these worlds as we look to unleash not only the XML stored in many enterprises but also relational data. We'll also need to do this quickly, as this world is moving fast, and those who don't embrace Web 3.0 will be left behind just like those still moving towards Web 2.0. One example of this speed that impressed me was a Facebook Open Graph Protocol vocabulary that was peer-edited during a session on Thursday and live by Friday. Amazing.

5) Twitter is a business tool. I've known this for some time and have had success stories, but given the audience of this conference (passionate web technologists), I saw the value of Twitter magnified by at least an order of magnitude. Every academic attendee was communicating via Twitter. I used it to find the IBM attendees and collaborate with them in ways I'm sure I would have missed without Twitter. I used it to meet people I'd never met before (it even led to lunch with Doc Searls and Kathy Gill, and another with a local company that is working with SIP technologies). If it weren't for Twitter, I'd say the value of the collaboration at this conference would have decreased by that same order of magnitude. Another funny story that proves Raleigh is well connected: a fight broke out on Twitter between two bars trying to earn our patronage for a dinner on the town. If you're a business that isn't paying attention to Twitter, are you losing only the cost of a few beers, or worse?

I'm sure there were more takeaways I'll remember, but for now that's a good starting point. If you were at FutureWeb and had other big takeaways, post them in the comments.

PS. I got to meet a bunch of great local XML/XQuery folks at the XQuery meet-up I organized. I look forward to collaborating with these folks locally in the future.

Friday, April 30, 2010

Here comes WebSphere CloudBurst 2.0

Just over a year ago, IBM announced the availability of the initial version of the WebSphere CloudBurst Appliance. Today, an announcement signals the coming availability of WebSphere CloudBurst 2.0, and that brings the major release count up to three in a period of about 12 months (the release of 1.1 came at the end of last year).

You can read the announcement for yourself, but here is a quick overview of the new features and enhancements delivered in the latest version:

- WebSphere Process Server support: You can now provision fully functional, virtualized WebSphere Process Server environments using WebSphere CloudBurst. This adds to the existing support for WebSphere Application Server, and the beta and trial versions of WebSphere Portal and DB2 respectively.

- Multi-image pattern support: In previous versions of WebSphere CloudBurst, all patterns mapped to a single virtual image. This meant your custom patterns could only contain parts (or nodes) from a single product. Now you can build patterns that contain parts from multiple different images. This allows you to represent diverse application environments, for instance, one that includes WebSphere Application Server, WebSphere Process Server, and DB2 components, as a single pattern. Of course, this also means installing and initializing these different product components becomes as simple as deploying a single pattern.

- Dynamic system management: During the lifetime of an application environment, it is commonplace to add additional capacity. Specifically for WebSphere environments, this often means adding more nodes and application servers into your landscape. WebSphere CloudBurst 2.0 makes it simple (click of a button) to add more nodes and application servers to a virtual system you previously deployed. Using this new capability, you can quickly scale up your application environment to meet the changing demands of its users. Conversely, you can scale down the environment and remove unnecessary nodes with the simple click of a button as well.

- Intelligence for the runtime: I always talk about the WebSphere intelligence the appliance delivers in terms of deploying and constructing WebSphere application environments. The addition of the WebSphere Application Server Hypervisor Edition Intelligent Management Pack means this intelligence starts to make its way into the runtimes of your application environments. Use the new intelligent management pack to enable a policy-based approach to managing your applications. You can enforce application health actions, govern application response times, and even manage the rollout of new versions of your application with no service disruption.

- New Red Hat WebSphere Application Server Hypervisor Edition: The WebSphere Application Server Hypervisor Edition is a virtual image that includes everything from the operating system all the way to WebSphere Application Server, pre-installed and pre-configured. Initial versions of this virtual image shipped with Novell SUSE Linux Enterprise Server. Starting in WebSphere CloudBurst 2.0, users can use a new WebSphere Application Server Hypervisor Edition virtual image for VMware ESX that packages the Red Hat Enterprise Linux Server operating system.

As WebSphere CloudBurst marches forward with new releases, a theme becomes apparent: Give users a choice. What do I mean? Well, just look at where WebSphere CloudBurst stands with the 2.0 release:

- You can use WebSphere CloudBurst to provision environments to VMware ESX, PowerVM, and z/VM hypervisor platforms

- You can use WebSphere CloudBurst to provision WebSphere Application Server, WebSphere Process Server, DB2, and WebSphere Portal

- You can run your virtualized WebSphere application environments on SUSE, Red Hat, AIX, and zLinux operating systems

Want to see more about the 2.0 release? Check out my new video. This much is inarguable: For running WebSphere application environments in an on-premise cloud, nothing comes close to the capabilities of WebSphere CloudBurst.

CEA Impact Sessions

Enabling Cobrowsing and Coshopping on your website - 2040A Tue, 4/May 10:15 AM - 11:30 AM Venetian - San Polo 3506

Adding Rich Interaction Support to your Enterprise Application - 2272A Wed, 5/May 10:15 AM - 11:30 AM Venetian - Lido 3103

Also, we have a lab on Enabling Coshopping and Two Way Forms on your Web Applications with CEA - 2027A Tue, 4/May 04:45 PM - 06:00 PM Venetian - Murano 3304

Finally, here is a video, under three minutes long, showing cobrowsing and the new mobile widgets we have made available as a tech preview and will be demoing here at Impact.

WebSphere Application Server Feature Pack for Dynamic Scripting

Today we announced a new feature pack for WebSphere Application Server based on WebSphere sMash. This new feature pack delivers the sMash programming model for use on entitled / current subscription WebSphere Application Server V6.1 and V7.0 servers.

Complete details can be found in the IBM.com announcement letter.

When the Feature Pack becomes electronically Generally Available, downloads will be available on the official IBM.com web site for WebSphere Application Server Feature Pack for Dynamic Scripting.

Also being made available through Project Zero, the sMash Enterprise Packager allows WebSphere Application Server V7 to deploy and manage sMash applications as EAR files through the administrative console. Read more about it and download the sMash Enterprise Packager on ProjectZero.org.

Find out more about this new Feature Pack and more at IBM Impact 2010.

Based on technology from WebSphere sMash V1.1.1, the feature pack for dynamic scripting provides support for dynamic scripting languages, including PHP and Groovy, while allowing you to integrate with AJAX, REST, Atom, RSS, and so on. The included Zero programming model also provides a resource model that simplifies the creation of RESTful services. Want a quick way to create a Web 2.0 application in these languages? Then give this feature pack a try.

Thursday, April 29, 2010

Video Blogs on Impact 2010 Sessions Next Week

I will be presenting on WebSphere XML Strategy, including a session on the WebSphere Application Server Feature Pack for XML that covers usage scenarios, how to use the feature pack, and best practices (Sessions 1635A/B, on Monday and repeated on Thursday). I will also be giving a general education session on XPath 2.0, XSLT 2.0, and XQuery 1.0 (Session 1634 on Tuesday) focused on the basics as well as what's new in the standards, with a cool giveaway! I will also be assisting with a lab where you can get hands-on experience with the XML Feature Pack and the Rational Application Developer tools (Session 1606 on Monday). Stop by any of these sessions or hit me up on Twitter (@aspyker) if you have any questions about XML or data strategy within your enterprise.

Along with the XML Feature Pack and XML strategy talks, I'll be participating in an SOA and BPM Performance update (1321), talking about SPEC SOA, and in multiple panel discussions around the value of the application server, performance, and feature packs.

You can hear me talk about the sessions here:

Some other videos about sessions next week are available as well.

Erik Kristiansen on RESTful, dynamic scripting, and OSGi programming models:

Lan Vuong on extreme transaction processing and elastic application architectures:

Thursday, April 8, 2010

Looking for XPath/XSLT/XQuery Education?

Ken and I have been collaborating recently on enabling his hands-on, in-depth XSLT/XQuery classes to use the IBM Thin Client for XML with WebSphere Application Server V7.0. Now students with the feature pack can quickly configure Crane's exercises to use the latest XSLT/XQuery support from IBM.

The three upcoming publicly-subscribed deliveries for XSLT/XQuery are:

West-coast North America: April 26-30, 2010 - San Francisco area

East-coast North America: May 10-14, 2010 - Ottawa, Canada

Europe: June 7-11, 2010 - Trondheim, Norway

Ken travels the world teaching a number of XML-related classes both privately and publicly. He is willing to consider teaching anywhere and he welcomes anyone to contact him regarding a possible private or public class of any of his material. Ken has taught and offers other classes. You can see them here.

Wednesday, April 7, 2010

XML Feature Pack 1.0.0.3 Available

We just released the 1.0.0.3 version of the XML Feature Pack that has two major new features (as well as some small bug fixes). The two new features are:

XQuery Schema Awareness

In the initial release we had Schema Awareness for XSLT 2.0. In this release we add similar function to XQuery. Specifically, we now support the optional XQuery 1.0 features of schema import and schema validation. With these features you can use your own type information in XQuery programs. A common scenario would be looking for all addresses in an input document, regardless of whether they were "billingAddress" or "shippingAddress" elements. Programming based on type information is a powerful concept that leads to more flexible implementations. Validation also lets you validate incoming documents, XML trees, and whole output documents, which allows for greater reliability in your XML processing.
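In schema-aware XQuery, the address scenario is typically written with a kind test such as `element(*, AddressType)`, which matches any element whose declared type is `AddressType`, whatever its name. The XML Feature Pack does this natively; the Python sketch below only imitates the idea with the standard library, under simplifying assumptions: a hypothetical schema with no target namespace, element declarations matched purely by their `type` attribute, and no type derivation. The function names are hypothetical.

```python
import xml.etree.ElementTree as ET

XS = "{http://www.w3.org/2001/XMLSchema}"

# Hypothetical schema: two differently named elements share one address type.
SCHEMA = """<xs:schema xmlns:xs="http://www.w3.org/2001/XMLSchema">
  <xs:complexType name="AddressType">
    <xs:sequence><xs:element name="city" type="xs:string"/></xs:sequence>
  </xs:complexType>
  <xs:element name="order">
    <xs:complexType>
      <xs:sequence>
        <xs:element name="billingAddress" type="AddressType"/>
        <xs:element name="shippingAddress" type="AddressType"/>
      </xs:sequence>
    </xs:complexType>
  </xs:element>
</xs:schema>"""

# Hypothetical instance document.
DOC = """<order>
  <billingAddress><city>Raleigh</city></billingAddress>
  <shippingAddress><city>Austin</city></shippingAddress>
</order>"""


def element_names_of_type(schema_root, type_name):
    """Names of element declarations whose type attribute equals type_name."""
    return {
        decl.get("name")
        for decl in schema_root.iter(XS + "element")
        if decl.get("type") == type_name
    }


def elements_of_type(doc_root, schema_root, type_name):
    """Select document elements by declared type rather than by element name."""
    wanted = element_names_of_type(schema_root, type_name)
    return [el for el in doc_root.iter() if el.tag in wanted]


schema = ET.fromstring(SCHEMA)
doc = ET.fromstring(DOC)
for address in elements_of_type(doc, schema, "AddressType"):
    print(address.tag, address.findtext("city"))
# billingAddress Raleigh
# shippingAddress Austin
```

A real schema-aware processor also handles namespaces, local declarations with the same name but different types, and derived types, which is exactly what this sketch leaves out.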

Debugging support for XSLT 2.0 under Rational Application Developer (RAD) for WebSphere

Previously, with RAD you could debug XSLT 1.0 stylesheets. With this new release of the XML Feature Pack and RAD 7.5.5.1, you can debug XSLT 2.0 stylesheets. This isn't just about moving to a newer stylesheet level. With all the changes in the data model and advanced new concepts like grouping, the debugger offers much richer visualization than the XSLT 1.0 debugger did.

What is also interesting is that this is a converged debugger. While there are other XSLT 2.0 debuggers out there, they only work on the stylesheet itself. With this support in RAD, you can debug not only the stylesheet, but also the Java code in your web application that invokes the XSLT engine along with any Java extension functions you might have. If you are using XSLT 2.0 in the application server, this is the tool you want for debugging end to end.

I hope to do a video demo of this Rational Application Developer functionality. Imagine setting breakpoints in XSLT as well as Java and being able to jump between them. Is anyone interested in seeing such a video demo?

Have fun with the new functions!

Sunday, April 4, 2010

Options for WebSphere Application Server Scripting

- AdminControl: Use to run operational commands.

- AdminConfig: Use to run configuration commands to create/modify WAS configuration.

- AdminApp: Use to administer applications.

- AdminTask: Use to run administrative commands.

- Help: Use to obtain general help.
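To make the object list concrete, here is a minimal sketch of AdminConfig in use. It assumes wsadmin started with `-lang jython` and sticks to syntax that older Jython versions accept. The function name `list_config_names` is hypothetical, and the AdminConfig object is passed in as a parameter so the same code can be tried against a stub outside wsadmin. `AdminConfig.list` returns one configuration ID per line, and `AdminConfig.showAttribute` reads a single attribute from one of those IDs.

```python
# Minimal sketch for wsadmin -lang jython (also runs under regular Python with a stub).


def list_config_names(admin_config, config_type):
    """Return the 'name' attribute of every configuration object of one type.

    admin_config is wsadmin's AdminConfig object; passing it in, rather than
    relying on the global, lets the function be exercised with a stub.
    """
    names = []
    # AdminConfig.list returns a string with one configuration ID per line.
    for config_id in admin_config.list(config_type).splitlines():
        config_id = config_id.strip()
        if config_id:
            names.append(admin_config.showAttribute(config_id, "name"))
    return names


# Inside wsadmin, for example:
#   print list_config_names(AdminConfig, "Server")
```

Swapping "Server" for another configuration type, such as "DataSource", is the usual way to inventory other parts of the cell with the same function.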

WAS provides a number of aids to developers and system administrators for the development of wsadmin scripts. Different options that can be leveraged in developing wsadmin scripts are explained below.

WAS V7 Script Libraries (new in V7, supported)

WebSphere Application Server V7.0 includes script libraries that can simplify the use of these objects.

Script libraries can perform higher-level wsadmin functions than a single wsadmin command can: a single call to a library function is enough to perform a complex task. Each script is written in Jython and is often referred to as "the Jython script". The script libraries are categorized into six types (Application, Resources, Security, Servers, System, and Utilities), some of which are further subdivided. See chapter 8 of the WAS V7 Administration and Configuration Guide for additional details. The script libraries are located in the WAS_INSTALL_ROOT/scriptLibraries directory. These libraries are loaded when wsadmin starts and are readily available from the wsadmin command prompt or for use in customized scripts.

Command assistance (supported)

The command assistance feature in the administrative console was introduced in WAS V6.1 with limited scope. The feature has been broadened in V7.0. When you perform an action in the administrative console, you can select the View administrative scripting command for last action option in the Help area of the panel to display the equivalent command. This command can be copied and pasted into a script or command window. You also have the option to send these commands as notifications to Rational Application Developer V7.5, where you can use the Jython editor to build scripts.

wsadminlib.py (provided 'as is'; see note below)

Another resource for WebSphere System Administrators for scripting is the wsadminlib.py script library.

wsadminlib.py is a large Python file containing hundreds of methods to help simplify configuring WAS using scripting. A wide variety of methods have been developed. These methods perform tasks such as creating servers, starting servers, creating clusters, installing applications, and configuring proxies, core groups, core group bridges, dynacache, shared libraries, classloaders, replication domains, security, BLA, JDBC, etc. Please note that wsadminlib is provided on an 'as is' basis under the IBM developerWorks license agreement. It is not a supported product. However, the underlying wsadmin calls made by the scripts are supported by IBM.

Happy Scripting!

Wednesday, March 31, 2010

Programming XML Across Multiple Tiers

While the sample is there, with source code, in the XML Feature Pack, we don't explain why we coded it the way we did. In this new developerWorks article (Programming XML across the multiple tiers: Use XML in the middle tier for performance, fidelity, and development ease), we explain in detail the simplicity, performance, and flexibility reasons behind how we coded the sample.

The article is worth a read. It will walk you through the new features in the XML Feature Pack and JDBC 4.0 that allow an end to end native XML programming model across the XML Feature Pack and an XML database. We hope to expand this article over time to cover more advanced concepts when working with XML databases.

Finally, here are two quick videos that show how to get the sample working with DB2 pureXML and Apache Derby.

DB2 pureXML (Part 1/2)

Direct Link (HD Version)

Apache Derby (Part 2/2)

Direct Link (HD Version)

Tuesday, March 30, 2010

XQuery as a replacement for VBScript?

In this presentation, I showed how I replaced a process we had internally using Excel and VBScript with an online application using XQuery running on the XML Feature Pack. I also showed how others have done non-query based applications using XQuery (like the XQuery Ray Tracer) proving that XQuery is a full language.

I wonder if others out there also believe that XQuery is a good language to replace VBScript and "programs" currently locked in Excel documents?

Monday, March 29, 2010

IBM WebSphere Application Server V8.0 Alpha

Building upon the capabilities of our previous releases, some of the Alpha features include:

- Key portions of Java™ Enterprise Edition 6.0 specifications

- Increased developer productivity

- Simplified product install with integrated prerequisite and interdependency checking

- Enhanced security and governance capabilities

- JPA L2 cache and JPA L2 cache integration with DynaCache

- High Performance Extensible Logging

Our architects, designers, engineers, testers, information developers, and user experience professionals are eager to participate with you in the Alpha forum, discussing what's new, learning about your experiences with all aspects of the product, and answering questions.

Also, the WebSphere Customer Experience Program (CEP) offers opportunities for interactive sessions with our development teams, including demos of potential new features and opportunities to provide feedback that we can use to drive improvements into each version of the product.

More details about the Alpha program, how to download the product, and the CEP program can be found here:

https://www14.software.ibm.com/iwm/web/cc/earlyprograms/websphere/wsasoa/index.shtml

And, here's a link to the Alpha forum:

http://www.ibm.com/developerworks/forums/forum.jspa?forumID=2180&start=0

We're looking forward to hearing from you about your experiences with the IBM WebSphere Application Server V8.0 Alpha.

Enjoy!

Wednesday, March 17, 2010

More OSGi goodness

First, install the feature pack (see here) and start the application server, then at the shell command line navigate to:

[AppServerHome]/feature_packs/aries/bin

There is a script in there called osgiApplicationConsole.sh (or .bat); run it and you should get a "wsadmin>" prompt. Type list() at the prompt and it will show you ... nothing. That's fine: you need to install and start an application before you can see anything.

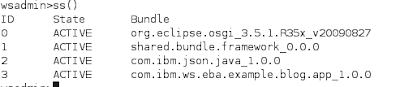

Install and start the Blog Sample (as described here), then try list() again; you will see something like this:

Two frameworks are listed, 'shared bundles' and the Blog application. Connect to the first like this:

wsadmin> connect(0)

Use the ss() command to look at what is in it:

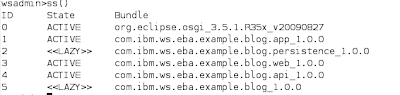

You don't have to disconnect from a framework explicitly, so to look at framework 1 (the Blog application) just connect to it:

wsadmin> connect(1)

then ss() shows the blog sample bundles.

This may by now be looking slightly familiar to anyone used to the Equinox OSGi console. The difference is that WebSphere is partitioning the space into separate application frameworks which you can look at individually - nice feature if you have a lot of applications. By the way, if you forget which framework you are connected to, list() at the wsadmin> prompt will tell you.

Other console commands can be found in the documentation, or by using 'help()' at the wsadmin> prompt. Have fun!

Friday, February 26, 2010

The pain of XML in Web 2.0

I took the download sample I described here and tried to visualize the data in Web 2.0 web pages. The data format of the XML of interest is:

First, I looked at the Dojo bar chart code and did something like this in a server-side XQuery program that generated HTML, with the following inside the JavaScript:

This works because XQuery can return a sequence of atomic values; in this case, I'm just returning a string and inserting it inside the JavaScript code that expects value/text pairs. But what if I want to have a REST endpoint serve up XML directly and have the browser consume it?

The Dojo DataGrid can read from a DataStore, which can be hooked to an XmlStore. This means I can use a browser-side control to read from my server-side XML. All seems good until you get into the details. Here are snippets of the code to make this "work":

What are some of the issues with this? First, the XmlStore has to map the XML to a simpler format for the DataGrid to understand it. That is why I had to manually tell the XmlStore to promote all the attribute values to similarly named elements. Nicely, the XmlStore lets you drill down to something other than the root item for the data, but it really just lets you pick the name of an element (you'll see I specified "month"). The second problem is that for complex industry-specific data, that likely wouldn't be sufficient. What if I had multiple month elements in different parts of the XML tree? I'd end up with a table that combined months that meant different things. What I'd really want is XPath as the root selector. Third, even though the Store abstraction is nice for handling multiple data formats, if I wanted to combine data from different parts of the XML tree, or from multiple trees, what I'd really like is XPath in the DataGrid formatter function itself.
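The difference between choosing a root item by element name and choosing it by path is easy to see on a small document. This is a Python standard-library sketch, not Dojo code: apart from `month` and `monthByMonthDownloadStats`, the element and attribute names in the sample document are hypothetical. ElementTree's `findall` supports only a limited XPath subset, but that is enough to show the point.

```python
import xml.etree.ElementTree as ET

# Hypothetical document: <month> appears under two different parents.
STATS = """<downloadStats>
  <monthByMonthDownloadStats>
    <month name="Jan" downloads="120"/>
    <month name="Feb" downloads="95"/>
  </monthByMonthDownloadStats>
  <plannedOutages>
    <month name="Mar" hours="4"/>
  </plannedOutages>
</downloadStats>"""

root = ET.fromstring(STATS)

# Selecting by element name only (like naming a root item) mixes both kinds of month.
by_name = [m.get("name") for m in root.iter("month")]

# A path expression scopes the selection to the download statistics only.
by_path = [
    (m.get("name"), int(m.get("downloads")))
    for m in root.findall("./monthByMonthDownloadStats/month")
]

print(by_name)  # ['Jan', 'Feb', 'Mar']
print(by_path)  # [('Jan', 120), ('Feb', 95)]
```

The name-based selection silently picks up the unrelated `Mar` entry, which is the "months that meant different things" problem described above.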

Assuming this might be easier in the other very popular JavaScript library, I went off and investigated jQuery. I quickly found articles that talked about jQuery and XML, and I patterned the next part after this example. Rewriting, I ended up with:

Now, with jQuery, I'm actually able to do a little more "native" XML querying. You'll see that I can access attributes directly, and that I can navigate to just the months or the monthByMonthDownloadStats. However, as someone who knows XQuery, I find this syntax very unnatural (I'm sure it's very clear to JavaScript and/or CSS writers). Unnaturalness aside, it also seems more verbose. In XQuery I can write this as:

With this I get all of the same benefits that jQuery has, plus more: I'm almost sure jQuery doesn't support the rich Functions and Operators of XPath 2.0, or the mixed XML content common in document-centric XML approaches. In my opinion, XQuery also mixes the construction of content with the querying of input much better (I believe that if we showed date comparisons, for example, the contrast would be even stronger). Of course, the benefit of jQuery over XQuery is that jQuery runs in the browser and XQuery doesn't; I had to run the previous XQuery sample on the server. That is a pretty big benefit.

I think the summary of all of this, if you stayed with me this long, is that Web 2.0 technology in the browser isn't really ready to handle the complex XML documents that exist within most enterprises. If you want to marry Web 2.0 with enterprise XML data, you'll need to either write data conversions that simplify the data, essentially extending the presentation tier across the browser and the middle tier, or use a feature like the Web 2.0 Feature Pack to do this for you. You'll also need to learn two languages (arguably three if you consider jQuery a language) and programming styles when dealing with the XML data.

Given that I focus on WebSphere XML strategy, I'm not sure I'm happy with this answer, and I am currently looking at other solutions to this issue. Since I'm rather new to Web 2.0, feel free to point out things I didn't consider in the Web 2.0 space for XML processing (outside of XForms, of course).

Monday, February 22, 2010

XPath, XSLT 2.0 and XQuery 1.0 in five minutes

The thin client for the XML Feature Pack lets client applications of the application server use the XPath 2.0, XSLT 2.0, and XQuery 1.0 runtime through the same APIs as when running in the application server. Previously, you could get the thin client only by installing the XML Feature Pack on top of the application server. Now we've made the thin client separately downloadable, which makes prototyping very simple.

Here are the links shown in the demo:

Direct link to download the thin client, Demo files

XML Feature Pack Thin Client Demo

Direct Link (HD Version)

Please note that the thin client is only supported on Java 1.6 JVMs.

Wednesday, February 17, 2010

Simple XQuery execution in Eclipse using XQDT/XML Feature Pack

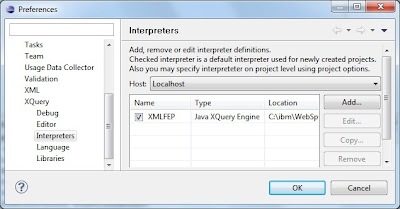

After installing XQDT, here is how to set things up so that it calls the XML Feature Pack:

1. Set up the interpreter to point to the XML Feature Pack thin client (you can obtain the thin client from here for evaluation, or from an XML Feature Pack installation)

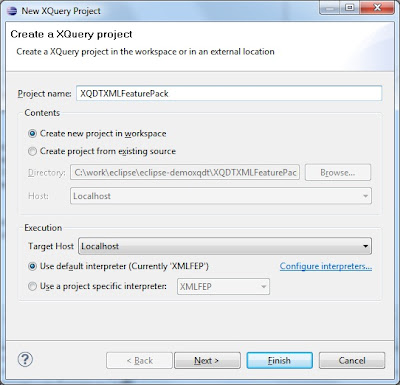

2. Create a new XQuery project

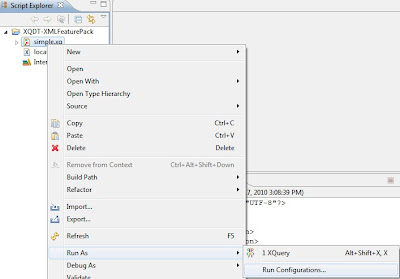

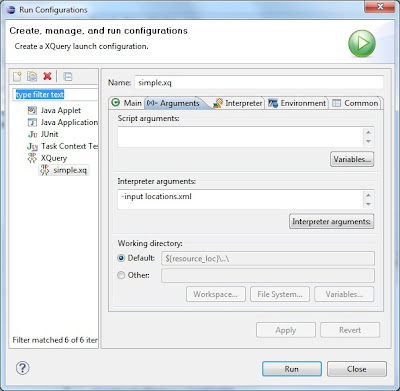

3. Set up the Run As XQuery options to specify the input file

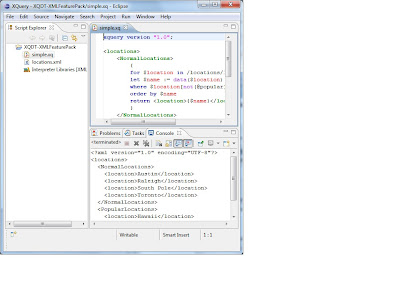

4. Run and view the output

This will get you to a place where you can quickly edit and run XQuery programs. It won't let you debug and doesn't integrate with your Rational Application Developer projects, but for quick edit/run/fix development of XQuery it does a decent job. It's worth noting that this is a setup I found to work on my own; since XQDT comes from Eclipse/open source, there is no IBM support. However, if you give it a try and have some feedback, post it on the forum and I'll pass it on to our tooling teams.

In the spirit of another big post, here are some images that show these steps, using the locations.xml and simple.xq that I used in this previous post.

To set up the interpreter to point to the XML Feature Pack thin client, open Window -> Preferences, navigate to XQuery -> Interpreters, and click Add.

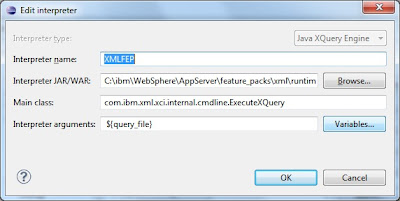

The settings to put into the dialog are:

Interpreter type: Java XQuery Engine

Interpreter name: XMLFEP

Interpreter JAR/WAR: C:\ibm\WebSphere\AppServer\feature_packs\xml\runtimes\com.ibm.xml.thinclient_1.0.0.jar

Main class: com.ibm.xml.xci.internal.cmdline.ExecuteXQuery

Interpreter arguments: ${query_file}

And it looks like this:

Next you need to create an XQuery project. It would be nice if you could use this functionality outside of an XQuery project, but I haven't been able to get that to work yet. You can create a new project by right-clicking in the project window and choosing New -> Other -> XQuery -> XQuery Project. Give it whatever name you want, and make sure you pick XMLFEP (or whatever you named it) as the default interpreter. It looks like this:

Next, copy the simple.xq and locations.xml into your project and refresh. Once you have done that you should be able to right click on simple.xq and do Run As->Run Configurations.... That looks like this:

Once you're in there, navigate to Arguments. You can add any command line options here, but most importantly you want to add the -input parameter and point it to the input file (locations.xml in this simple sample). That looks like this:

Once you have this set up, you can run the XQuery file in the project by right-clicking it and choosing Run As -> XQuery, or simply by pressing Control-F11. If everything is set up correctly, you'll see the output in the console window. That should look like this:

Update 2010-02-22: If you have Java 1.5 on your path, make sure you replace it with Java 1.6. Otherwise you'll get an error about invalid class formats or magic numbers, since the thin client only supports Java 1.6 JDKs. You can tell whether your system has Java 1.5 on the path by opening a command prompt or shell and typing java -fullversion. Hopefully XQDT will at some point let you control which Java the execution runs on instead of defaulting to the version of Java on the global path.
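If you would rather check this from a script than by eye, here is a small Python sketch. It assumes that `java -fullversion` prints a quoted version string such as `"1.6.0_17-b04"` (typically on stderr, so the sketch reads both streams). The function names are hypothetical, and only the major and minor numbers are extracted.

```python
import re
import subprocess


def parse_java_version(output):
    """Return (major, minor) from `java -fullversion` text, or None if absent.

    Handles legacy strings like "1.6.0_17-b04" as well as newer ones like "11+28".
    """
    match = re.search(r'"(\d+)(?:\.(\d+))?', output)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2) or 0)


def java_version_on_path(java="java"):
    """Run `java -fullversion` from the PATH and return (major, minor), or None."""
    try:
        result = subprocess.run([java, "-fullversion"],
                                capture_output=True, text=True)
    except OSError:  # no java executable found on the PATH
        return None
    return parse_java_version(result.stderr + result.stdout)


# The thin client is only supported on Java 1.6, for example:
#   if java_version_on_path() != (1, 6):
#       print("Put a Java 1.6 JDK first on the PATH before running XQDT")
```

On a machine whose path still points at Java 1.5, `parse_java_version` yields `(1, 5)`, which is the case that produces the invalid class format errors.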

Update 2010-10-06:

XQDT has moved to the WTP Incubator at Eclipse. The XQDT team just released a new milestone, which notably brings compatibility with the latest Eclipse Helios (Eclipse 3.6). For more details on the changes, see the New and Noteworthy page on the Eclipse web site:

http://wiki.eclipse.org/XQDT/New_and_Noteworthy/0.8.0

To install the latest XQDT build from Eclipse, make sure to stop using the old XQDT update site. Instead use the Eclipse update site:

http://download.eclipse.org/webtools/incubator/repository/xquery/milestones/

Friday, February 12, 2010

Try out WebSphere's OSGi Application Feature

I will refer to your WebSphere home directory as WAS_HOME throughout this post. I ran through this using the free-for-developers version of WebSphere running on Ubuntu, so there may be a slightly Linux-y flavour; I'll document the 'assume nothing' Ubuntu install here. Everything should, of course, work on any supported WAS platform.

The Blog sample

The Blog sample is an OSGi Application that demonstrates the main concepts and many of the benefits of assembling and deploying an enterprise application as an OSGi Application. It comprises four main bundles and an optional fifth bundle; the relationship between the bundles is shown below:

The Blog sample demonstrates Blueprint management, bean injection, using and publishing services from and to the OSGi service registry, using optional services, and the use of Java persistence. In the main application, supplied as an EBA (enterprise bundle archive), the four bundles are:

- The API bundle - describes all of the interfaces in the application

- The Web bundle - contains all of the front end (servlet) code and the 'lipstick' (css, images)

- The Blog bundle - the main application logic. This bundle publishes a 'blogging service' that the Web bundle uses.

- The persistence bundle - the code that deals with persisting objects (authors, blog posts, ...) to a database (Derby in this case). The persistence bundle supplies a service for this, which is used by the Blog bundle; the persistence service in this implementation uses JPA, with OpenJPA as the JPA provider.

- The final bundle is an upgrade to the persistence service, it contains an additional service that will deal with persisting comments as well as authors and blog posts.

Running the Blog Sample

The first steps in running the sample are to set up some data sources and create the database that the sample will use. There are instructions on how to do both in the Readme.txt file which can be found in WAS_HOME/feature_packs/aries/samples/blog; when you run the blogSampleInstall.py script use the 'setupOnly' option which will just create data sources. I'm going to step through the rest of the installation using the WebSphere Admin Console.

Start up WebSphere and point your web browser at the Admin Console. If you are running on a local machine and have not set up administrative security, you will find the console at http://localhost:9060/ibm/console. Before going any further, check that the data sources were set up properly by navigating to Resources->JDBC->Data sources; you should see something like this:

In the next sections I will work through installing the sample, starting with the bundles that it depends on.

Deploying bundles by reference

The Blog sample uses a common JSON library. Although the library could be deployed as part of the application, the Blog sample illustrates how common libraries can be installed to the new WebSphere OSGi bundle repository and provisioned as part of the installation of an application that requires them. So the first thing we do is add the common JSON library to the WebSphere bundle repository. Navigate to Environment->OSGi Bundle Repositories->Internal bundle repository; the repository will be empty if you are using a new installation.

Click on 'New' to add a new bundle, and on the next screen add the asset WAS_HOME/feature_packs/aries/InstallableApps/com.ibm.json.java_1.0.0.jar. Click 'OK' and then save the configuration; you should see this screen:

Creating the Blog Asset

Installing an OSGi Application through the Admin console is accomplished in two steps, described below. The blogSampleInstall.py script mentioned above illustrates the underlying wsadmin commands for a scripted install. The first step is to add an EBA (enterprise bundle archive) as an administrative asset; the .eba extension just indicates that this is an OSGi Application. Navigate to Applications->Application Types->Assets. Click 'Import' and add WAS_HOME/feature_packs/aries/InstallableApps/com.ibm.ws.eba.example.blog.eba. After saving you should see this:

Creating the Blog Sample Business Level Application

The second step is to create an application that uses the EBA asset. Navigate to Applications->Business Level Applications and add a new application called Blog Sample:

After you have added the application, you must associate it with the Blog sample asset: click on the sample and add com.ibm.ws.eba.examples.blog.eba under Deployed Assets.

As usual, save the configuration.
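For a scripted install, the console steps for creating the asset and the business-level application map onto AdminTask commands. blogSampleInstall.py is the authoritative version; what follows is only a minimal sketch in wsadmin Jython, assuming the `importAsset`, `createEmptyBLA`, and `addCompUnit` commands with just their basic `-source`, `-name`, `-blaID`, and `-cuSourceID` options. Depending on your setup, `addCompUnit` may need further options (such as target mapping) that the sketch does not attempt. The function name is hypothetical, and AdminTask/AdminConfig are passed in so a stub can stand in for them outside wsadmin.

```python
# Minimal sketch for wsadmin -lang jython; not taken from blogSampleInstall.py.
# admin_task and admin_config are wsadmin's AdminTask and AdminConfig objects.


def install_eba_as_bla(admin_task, admin_config, eba_path, asset_name, bla_name):
    """Import an EBA as an asset, wrap it in a new BLA, and save."""
    # Step 1: register the .eba archive as an administrative asset
    # (Applications->Application Types->Assets->Import in the console).
    admin_task.importAsset(["-source", eba_path])
    # Step 2a: create an empty business-level application.
    admin_task.createEmptyBLA(["-name", bla_name])
    # Step 2b: add the asset to the BLA as a composition unit
    # (the "Deployed Assets" step in the console).
    admin_task.addCompUnit(["-blaID", "WebSphere:blaname=" + bla_name,
                            "-cuSourceID", "WebSphere:assetname=" + asset_name])
    # Equivalent of clicking Save in the console.
    admin_config.save()


# Inside wsadmin, for example (replace WAS_HOME with your install directory):
#   install_eba_as_bla(AdminTask, AdminConfig,
#       "WAS_HOME/feature_packs/aries/InstallableApps/com.ibm.ws.eba.example.blog.eba",
#       "com.ibm.ws.eba.example.blog.eba", "Blog Sample")
```

Once the configuration is saved, `AdminTask.startBLA` with the same `-blaID` value corresponds to the Start button on the Business Level Applications screen.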

Start and run the Blog Sample application

At this point everything is in place and ready to run the application. From the Business Level Application screen, select the radio button beside the Blog sample and click Start. If the sample starts as expected, point your web browser to http://localhost:9080/blog and you will see this:

With the blog sample running you will be able to add authors and posts and see that they are persisted to the database. Here is my first post to the Blog sample:

There isn't a great deal of functional code in this 1.0.0 version of the sample but a 1.1.0 version of the blog.persistence bundle is provided which adds a functional service to enable you to add comments to blog posts. We'll now illustrate how to update an application to add a new service by moving from version 1.0.0 of the blog persistence bundle to version 1.1.0 which contains the new service.

Changing the bundles that the Blog Sample application uses

First you will need to add the blog.persistence_1.1.0 jar to the internal bundle repository. This means repeating the same steps as for adding the JSON jar above. The path to the archive is WAS_HOME/feature_packs/aries/InstallableApps/com.ibm.ws.eba.example.blog.persistence_1.1.0.jar. Add it to the internal bundle repository and save the configuration.

Now you need to allow the application to use the new bundle. To do this, select the blog sample asset by navigating to Applications->Application types->Assets and clicking on com.ibm.ws.eba.examples.blog.eba. Scroll down almost to the end of the next screen, where you will find this link:

Clicking on the 'Update bundle versions...' link will take you to this page:

Click on the drop-down arrow to the right of the line for the persistence bundle; you will be offered a choice of using the 1.0.0 or the 1.1.0 bundle. Choose 1.1.0 and follow through the preview and commit screens. You will need to restart the Blog application (from the Business Level Application screen) to make it use the new bundle. After that, navigating to http://localhost:9080/blog should show you the blog application with a new link to add comments. Unfortunately, it doesn't. This is what you will see:

This turns out to be entirely my mistake. In the last-minute scramble to get the sample into the Beta delivery, I didn't notice that changes had been made to the JPA support at the same time. As a consequence of those changes, my MANIFEST.MF requires an additional line. This is an easy fix, and in the next section I'll describe how to make it.

How to modify the Blog Sample

All of the sample source code and Ant build files can be found under WAS_HOME/feature_packs/aries/samples/blog. Before making any other changes, modify the build.properties file in this directory so that the first line refers to your WAS_HOME; you will need this file to build the code later on.

The best way to fix the problem with the MANIFEST.MF is to create another version of the persistence bundle; it should be a 1.1.1 version since the fix is very small. To start with, create a new directory under WAS_HOME/feature_packs/aries/samples/blog called com.ibm.ws.eba.example.blog.persistence_1.1.1, then copy the entire contents of com.ibm.ws.eba.example.blog.persistence_1.1.0 into it. Two files need to be modified. The first is META-INF/MANIFEST.MF, which needs the additions highlighted in pink below:

Be very careful with the Meta-Persistence: line; it must have a space after the colon, and the code will not compile if it doesn't. The second file that needs a small modification is build.xml: the project name needs to end in 1.1.1, not 1.1.0.
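Because a missing space after a colon is easy to overlook, a quick pre-build check can catch it. This Python sketch parses the main section of a MANIFEST.MF following the JAR manifest rules (each header is written as `Name: value` with a space after the colon, and a line that starts with a single space continues the previous header) and reports a malformed line by number. The helper name and the file path in the usage comment are hypothetical, and the sketch only checks that a Meta-Persistence header is present, not what its value should be.

```python
def parse_manifest(text):
    """Parse the main section of a JAR manifest into a dict of headers.

    Raises ValueError for a header line that lacks the required ": " separator.
    """
    headers = {}
    last = None
    for lineno, line in enumerate(text.splitlines(), start=1):
        if not line:
            break  # a blank line ends the main section
        if line.startswith(" "):
            if last is None:
                raise ValueError("line %d: continuation with no header" % lineno)
            headers[last] += line[1:]  # continuation: drop exactly one leading space
            continue
        name, sep, value = line.partition(": ")
        if not sep:
            raise ValueError("line %d: header needs a space after the colon: %r"
                             % (lineno, line))
        headers[name] = value
        last = name
    return headers


# Usage (path is illustrative):
#   with open("com.ibm.ws.eba.example.blog.persistence_1.1.1/META-INF/MANIFEST.MF") as f:
#       headers = parse_manifest(f.read())
#   if "Meta-Persistence" not in headers:
#       print("Meta-Persistence header is missing")
```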

After making the changes, run the build.xml file in your new 1.1.1 directory, like this:

ant -propertyfile ../build.properties -buildfile build.xml

This will create the archive target/lib/com.ibm.ws.eba/blog.persistence_1.1.1.jar. To install the new archive, go back to the WAS console and repeat the steps for adding it to the internal bundle repository and making the Blog Asset use it. Finally, restart the Blog application, point the web browser to the Blog and hit refresh. Et voila! A new link has appeared so that comments can be added to the post. Here is a screen shot with a comment added:

How does it work?

This Blog sample is designed to demonstrate how easy it is to change bundles and how to use optional services. To make this work, we had to design the sample from the start to be able to use the additional comment service. This isn't unrealistic: how often have you had a complete design in mind but not had time to implement the whole thing before delivering it? In this case we stopped short of delivering the service in the first version, but we were able to supply it as an upgrade with an almost undetectable interruption to the service.
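The optional-service idea can be illustrated outside OSGi. The following is a plain-Python analogy, not Blueprint and not the sample's Java code: the class and method names are hypothetical, and the comment service is passed in by hand where the Blueprint container would inject it. The front end renders posts either way and only offers commenting when the service is present, which is roughly the property that let the upgraded persistence bundle switch commenting on without changing the other bundles.

```python
from typing import Dict, List, Optional


class InMemoryCommentService:
    """Hypothetical stand-in for the comment service added in the upgraded bundle."""

    def __init__(self) -> None:
        self._comments: Dict[int, List[str]] = {}

    def add(self, post_id: int, text: str) -> None:
        self._comments.setdefault(post_id, []).append(text)

    def comments_for(self, post_id: int) -> List[str]:
        return list(self._comments.get(post_id, []))


class BlogFrontEnd:
    """Hypothetical front end with an optional comment service dependency."""

    def __init__(self, comment_service: Optional[InMemoryCommentService] = None) -> None:
        # In the real sample a container supplies the service; here it is passed by hand.
        self.comment_service = comment_service

    def render(self, post_id: int, body: str) -> str:
        lines = [body]
        if self.comment_service is not None:
            # Only offer commenting when a comment service is available.
            for comment in self.comment_service.comments_for(post_id):
                lines.append("> " + comment)
            lines.append("[Add comment]")
        return "\n".join(lines)


# Before the upgrade: no comment service, so no comment link.
print(BlogFrontEnd().render(1, "My first post"))
# After the upgrade: the same front end picks up commenting.
service = InMemoryCommentService()
service.add(1, "Nice post!")
print(BlogFrontEnd(service).render(1, "My first post"))
```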

The sample is designed so that the bundles can be maintained completely independently of each other - I want the ability to upgrade one bit at a time. This might be overkill for an application of this size but the principle applies to applications of any complexity.

The other thing I have rather glossed over is that I didn't change the database. Again, the database had to have the right structure for the comment service from the start; however, this follows fairly naturally from designing the application to expect to be able to use commenting.

The best way to understand what is happening when the application is running is to look at the META-INF/MANIFEST.MF and OSGI-INF/blueprint/blueprint.xml files for each bundle. As the code is fairly simple, it's easy to follow through to the Java code and see where properties are injected by the container as specified in the application blueprint.

In the next revision of the Beta release I will fix the mistake in the MANIFEST.MF and will also correct a horrible anti-pattern that I introduced while trying to keep the persistence and blog layers separate. In fact, I'll buy a beer for anyone who can spot it and send me a good fix for it!