Friday, December 12, 2008

Service Component Architecture GA

In addition to the code download, here are some other useful links:

SCA Feature Pack InfoCenter

SCA Feature Pack Release Notes

SCA Feature Pack developerWorks Roadmap (available here soon)

As an early holiday present for developers, Rational has announced an open beta program that demonstrates, among other things, a new visual composite editor for Open SCA. Details about the program can be found here.

Happy coding!

Wednesday, November 19, 2008

Properties File Based Configuration for WebSphere

The following commands are provided to perform properties file based configuration:

1. extractConfigProperties: Extracts the configuration of the entire cell, or the properties of a specified configuration object, to a file.

2. applyConfigProperties: Applies the properties specified in the properties file to the system.

3. validateConfigProperties: Validates a properties file before it is applied to the system.

4. deleteConfigProperties: Deletes the properties specified in the properties file from the system.

5. createPropertiesFileTemplates: Creates template properties files to use when creating or deleting specific object types.

More information can be found here:

http://publib.boulder.ibm.com/infocenter/wasinfo/v7r0/index.jsp?topic=/com.ibm.websphere.base.doc/info/aes/ae/rxml_7propbasedconfig.html

Tuesday, November 18, 2008

Customer feedback in Barcelona


Yes, there are worse things than spending a week in Barcelona. I've never been there before, and I definitely needed at least another week to see everything I wanted to see.


I was there to run our Customer Feedback Program, which is a new track that debuted last spring at Impact 2008. The idea is that at most IBM conferences, the attendees naturally spend most of the week listening to IBMers speak. Where are we headed? What's new in the products? What's our newest three-letter acronym? We wanted to turn this around and have sessions where the customers do the talking and the IBMers do the listening.


These are small roundtable sessions with a couple of IBMers (usually a technical architect, designer, and/or product manager) and around four customers. After the sessions, we consistently hear from customers that they are the most valuable sessions of the week from their perspective, and the IBMers always walk away with great feedback about our products.


We're trying to make these customer feedback sessions a staple of all our conferences, so the next time you attend a conference (like Impact 2009 at the Venetian in Las Vegas), look for the feedback sessions. It's time well spent.

And if you ever get an opportunity to go to Barcelona, take it!

But watch out for the pigeons...


Speaking tomorrow at the Charlotte WUG

Friday, November 14, 2008

Centralized Installation Manager (CIM)

The CIM program can be installed into a v7 ND Deployment Manager, and can then easily install and maintain the workstations in a WebSphere cell. An administrator can remotely install or uninstall product packages and maintenance on specific nodes directly from the administrative console, without having to log in repeatedly and perform these tasks on each individual remote machine. CIM operations can be done through its graphical user interface or scripted for full automation. Because CIM previously shipped only with XD-6.1, almost no "ND" WebSphere customers currently know about it. For details about CIM and how you can leverage it to simplify and automate your enterprise servers, please see the CIM information in the WebSphere v7 InfoCenter. If you are an ND cell administrator, CIM is something you need to seriously consider; it can significantly simplify your day-to-day operations.

Thursday, November 13, 2008

Videos available that quickly walk you through WAS V7 functionality

I just found out that there is a series of about 50 videos available in the WAS V7 InfoCenter that cover many of the new features of WAS V7. I have watched a few of them and find them very clear and short enough to consume on breaks between other work.

Go here to find the IBM Education Assistant videos for WAS V7.

Saturday, November 8, 2008

More information on the TPC SOA benchmark

On SOA, Mike explains, "TPC's SOA benchmark is only in the proposal stage, but the tentative plan is to focus on common industry-accepted portions of SOA infrastructure, mainly Web services, the enterprise service bus, and business process choreography. As advanced SOA practices become more standard in the industry, TPC will expand the benchmark to incorporate additional SOA infrastructural services".

I'm excited to work within the TPC to continue driving this SOA performance benchmark to reality. There are no other standard benchmarks that tackle these common SOA infrastructural components. If you have interest in seeing such a benchmark or comments on how it would help you, please post a comment. I'll relay them back to the TPC.

Wednesday, November 5, 2008

WebSphere Application Server V7.0: What's New for Security

Our new WebSphere Security Domains provide finer-grained management of security controls by offering more flexibility in configuring security under centralized management. WebSphere Security Domains are designed to allow a separation between WebSphere administrative security and your business application security. For example, business applications can be configured to use your external LDAP registry, while WebSphere administration uses the Federated Repository's file-based registry containing internal users. Granularity can be extended further by giving business applications security configurations separate from one another, using the new ability to scope a security configuration to a cell, a cluster, or an application server. This new level of granularity provides significant flexibility in the security mechanisms implemented across various application portfolios.

Our new WebSphere Security Auditing feature offers enhanced compliance and auditing capabilities, allowing a number of security-related events to be tracked. For example, administrative actions that can be logged include security configuration changes, key and certificate management, and access control policy changes. Business applications can be audited to record security events such as authentication or authorization attempts. This new security logging and auditing capability ensures accountability for administrative actions. In addition, we offer a tamper-proof audit file to prevent any tampering with recorded audit data. For z/OS customers, the generated auditing data can optionally be integrated with the z/OS System Management Facility by recording the WebSphere auditing data as Type 83 records.

The WebSphere Secure Proxy has also been improved. It offers a new DMZ Hardened Proxy profile option. The DMZ Hardened Proxy improves security by minimizing the number of external ports opened, loading only signed JARs, and running as an unprivileged user when binding to well-known ports. Both static and dynamic routes are supported by the DMZ Hardened Proxy.

We encourage you to visit the What's New section of the WebSphere Application Server InfoCenter for more information on these features, as well as the many other exciting features we are offering in WebSphere Application Server V7: http://publib.boulder.ibm.com/infocenter/wasinfo/v7r0/index.jsp?topic=/com.ibm.websphere.nd.multiplatform.doc/info/ae/ae/welc_newsecurity.html

Monday, November 3, 2008

EJB 3.0 and Web Services in WebSphere App Server v7.0

```java
import javax.ejb.Stateless;
import javax.jws.WebService;

@WebService
@Stateless
public class MyEJB3WebServiceBean implements MyCoolService {
    // ...
}
```

Unfortunately, we weren't able to directly support this scenario via the v6.1 feature packs, since doing so would have required each feature pack to depend on function in the other, which wasn't allowed under the overall definition of the v6.1 feature packs. We published a workaround for this, in which you use a "helper class" as the JAX-WS implementation and have that class simply forward incoming requests to the target EJB 3.0 bean. It works just fine, but is nowhere near as nice as having a single class that's both the endpoint definition and the implementation.
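A minimal plain-Java sketch of that v6.1 workaround follows. The `greet` operation and the `MyEJB3Bean` and `MyCoolServiceHelper` names are hypothetical. In a real application the helper would carry the JAX-WS `@WebService` annotation and obtain the EJB 3.0 bean through an EJB reference; those Java EE pieces are left out so the sketch compiles on a plain JDK, and constructor injection stands in for the lookup.

```java
// Hypothetical service contract; the post names MyCoolService but not its operations.
interface MyCoolService {
    String greet(String name);
}

// Stands in for the EJB 3.0 bean (annotated @Stateless in the real application).
class MyEJB3Bean implements MyCoolService {
    @Override
    public String greet(String name) {
        return "Hello, " + name;
    }
}

// The v6.1-style "helper class": in the real application this would be the
// @WebService endpoint. It holds no business logic and simply forwards each
// incoming request to the target bean.
class MyCoolServiceHelper implements MyCoolService {
    private final MyCoolService target;

    MyCoolServiceHelper(MyCoolService target) {
        // Assumption: the bean reference is handed in here; a real helper
        // would obtain it via an EJB reference or JNDI lookup.
        this.target = target;
    }

    @Override
    public String greet(String name) {
        return target.greet(name); // pure delegation
    }
}
```

The helper adds no behavior of its own, which is why folding it into the bean itself, as v7.0 allows, is the nicer model.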

The good news is that with WebSphere App Server v7.0, you can directly annotate your EJB 3.0 beans with the @WebService or @WebMethod annotation (just like the code above) and have them directly accessible via a JAX-WS endpoint; no "helper" class required. The Rational Application Developer (RAD) 7.5 tooling also makes it easy to code up your EJB 3.0 beans this way.

The ease-of-use combination of EJB 3.0 and JAX-WS is really, really nice. WebSphere App Server v7.0 makes it easy to implement this powerful combination of function.

Monday, October 27, 2008

New SIP RFCs Supported by WebSphere 7 (Join, Replace and Update)

From abstract of RFC 3911:

This document defines a new header for use with SIP multi-party applications and call control. The Join header is used to logically join an existing SIP dialog with a new SIP dialog. This primitive can be used to enable a variety of features, for example: "Barge-In", answering-machine-style "Message Screening" and "Call Center Monitoring".

From abstract of RFC 3891:

The Replaces header is used to logically replace an existing SIP dialog with a new SIP dialog. This primitive can be used to enable a variety of features, for example: Attended Transfer and Call Pickup.

In terms of RFC 3911, the Join header contains information (call-id, to-tag, and from-tag) used to match an existing SIP dialog to the new dialog being created. From the JSR 116 perspective, the Join header can be used to add a new dialog/SIP session to an existing SIP application session, in much the same way that an encoded URI is used. This is achieved by setting the call-id, to-tag, and from-tag in the Join header of the INVITE to match those of an existing dialog.

In terms of RFC 3891, the Replaces header contains information (call-id, to-tag, and from-tag) used to match an existing SIP dialog and replace it with the new dialog being created. From the JSR 116 perspective, the Replaces header can be used to replace an existing SIP session associated with a SIP application session with a new dialog/session. This is achieved by setting the call-id, to-tag, and from-tag in the Replaces header of the INVITE to match those of an existing dialog. Note that it is up to the application to send a BYE on the original dialog; the container will not do this for you. As a result, from the JSR perspective there really is not much difference between Join and Replaces.
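A container-side sketch of the matching step, assuming the header value format shown in RFC 3891 and RFC 3911 (`call-id;from-tag=...;to-tag=...`). `DialogMatcher` and the application session ids are hypothetical; this is not the JSR 116 or WAS API. Following RFC 3891, the receiving side compares to-tag with its local tag and from-tag with its remote tag.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical sketch: how a container could use a Join or Replaces header
// value to find the existing dialog (and so the SIP application session)
// that a new INVITE should be associated with.
class DialogMatcher {
    // Existing dialogs keyed by call-id, local tag and remote tag.
    private final Map<String, String> sessionByDialog = new HashMap<>();

    private static String key(String callId, String localTag, String remoteTag) {
        return callId + "|" + localTag + "|" + remoteTag;
    }

    // Records an established dialog and the application session id it belongs to.
    void register(String callId, String localTag, String remoteTag, String appSessionId) {
        sessionByDialog.put(key(callId, localTag, remoteTag), appSessionId);
    }

    // Parses e.g. "98732@sip.example.com;from-tag=r33th4x0r;to-tag=ff87ff"
    // and returns the matching application session id, or null if none matches.
    String match(String headerValue) {
        String[] parts = headerValue.split(";");
        String callId = parts[0].trim();
        String toTag = null;
        String fromTag = null;
        for (int i = 1; i < parts.length; i++) {
            String[] nv = parts[i].trim().split("=", 2);
            String name = nv[0].trim().toLowerCase(); // parameter names are case-insensitive
            String value = nv.length > 1 ? nv[1].trim() : "";
            if (name.equals("to-tag")) {
                toTag = value;
            } else if (name.equals("from-tag")) {
                fromTag = value;
            }
            // Other parameters (such as early-only on Replaces) are ignored here.
        }
        if (toTag == null || fromTag == null) {
            return null; // both tags are required to identify a dialog
        }
        // to-tag is compared with the local tag, from-tag with the remote tag.
        return sessionByDialog.get(key(callId, toTag, fromTag));
    }
}
```

A match only associates the new dialog with the existing application session; for Replaces, sending the BYE on the original dialog is still the application's job.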

RFC 3311 support was also added to WAS 7. This RFC defines the use of the Update method. From abstract of RFC 3311:

UPDATE allows a client to update parameters of a session (such as the set of media streams and their codecs) but has no impact on the state of a dialog. In that sense, it is like a re-INVITE, but unlike re-INVITE, it can be sent before the initial INVITE has been completed. This makes it very useful for updating session parameters within early dialogs.

Wednesday, October 22, 2008

Asynchronous Request Dispatching, Part 2

This is part two of my previous blog where I said I would discuss the differences between WebSphere’s Asynchronous Request Dispatcher and the Asynchronous Servlet Proposal from the Java Community Process.

The initial Servlet EG proposal introduced a different model of asynchronous servlet processing through suspend, resume, and complete methods. The basic idea is to allow a servlet to initiate asynchronous operations and re-dispatch to the same servlet once these operations are complete. A suspend tells the container to disable the response so that additional logic in the initial dispatch doesn’t affect the response. Meanwhile, the application programmer uses another thread to do some asynchronous work required by the request. Once that is complete, resume is called which tells the container to schedule a dispatch of the request back through the filters and servlets. Alternatively, complete can be called to simply close the output without a re-dispatch. This has been debated thoroughly, and there are still competing proposals which are arguably less powerful. However, since this is the only publicly discussed proposal, I will use that for comparison.

I will present a few scenarios and show the way they can be solved in ARD and the Servlet EG proposal. This should help you understand the pluses and minuses of using one paradigm over the other.

Example 1: A request needs to print out a table that will be filled in with the results of two slow queries to external resources.

EG Proposal:

1. Original servlet prints out the table up to the point query 1 results are required

2. Suspend the request

3. Kick off query 1

4. Resume/re-dispatch the request after query 1 completion

5. Write results of query 1

6. Write next portion of the table

7. Suspend the request

8. Kick off query 2

9. Resume/re-dispatch the request after query 2 completion

10. Write results of query 2

11. Finish writing the table from the original servlet

ARD:

- Original servlet prints out the table up to the point the query results are required on thread 0

- Do async include on thread 1 to the servlet that does query 1

- Concurrently, thread 0 continues writing the table

- Do async include on thread 2 to the servlet that does query 2

- Concurrently, thread 0 continues writing the table

- Thread 1 returns and flushes content to the client up to query 2

- Thread 2 returns and finishes the response

ARD Advantages:

- ARD can finish sooner because queries 1 and 2 run simultaneously. The EG Proposal kicks them off one at a time.

- With the EG Proposal, the application programmer could emulate query 1 and 2 running simultaneously, but they would have to do their own threading.

- With ARD, the original thread can write out the full outline of the page because the container has the smarts to go back in and find the correct position for the response content. The EG Proposal does not.

- The EG Proposal would have more issues with tracking state because the resume goes back through the filter and servlet request handling methods. The filters and servlets would have to make sure they are not duplicating output that was written before the initial or subsequent suspend.

- Order of completion is not important when using ARD.

ARD Disadvantages:

- ARD only allows asynchronous operations inside of includes.

- More threads are used.
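The ordering behavior in the ARD column of Example 1 can be approximated in plain Java with `CompletableFuture`. This is an analogy rather than the ARD API: ARD does this inside the container for request dispatcher includes, while here the two queries, their delays, and the `OrderedAsyncPage` class are hypothetical. Both queries start at once, the request thread lays out the whole table without waiting, and each result lands in its reserved slot regardless of which query finishes first.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

class OrderedAsyncPage {
    // Simulates a slow query to an external resource (hypothetical).
    static String slowQuery(String name, long millis) {
        try {
            Thread.sleep(millis);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return "<td>" + name + " result</td>";
    }

    static String render() {
        ExecutorService pool = Executors.newFixedThreadPool(2);
        try {
            // The response is an ordered list of fragments; async results fill reserved slots.
            List<CompletableFuture<String>> fragments = new ArrayList<>();
            fragments.add(CompletableFuture.completedFuture("<table><tr>"));
            // Query 1 is deliberately slower than query 2, so it completes last.
            fragments.add(CompletableFuture.supplyAsync(() -> slowQuery("query1", 200), pool));
            fragments.add(CompletableFuture.completedFuture("</tr><tr>"));
            fragments.add(CompletableFuture.supplyAsync(() -> slowQuery("query2", 50), pool));
            fragments.add(CompletableFuture.completedFuture("</tr></table>"));

            // Output order follows slot order, not completion order.
            StringBuilder out = new StringBuilder();
            for (CompletableFuture<String> f : fragments) {
                out.append(f.join());
            }
            return out.toString();
        } finally {
            pool.shutdown();
        }
    }
}
```

The sketch also shows the "more threads" cost listed above: the two queries occupy pool threads in addition to the request thread.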

Example 2: A request requires the results of two slow queries to external resources where state from query 1 is required for query 2 to work.

EG Proposal:

- Enter the original servlet

- Suspend the request and kick off query 1

- Resume the request after query 1 completion

- Re-enter the original servlet

- Suspend the request and kick off query 2 with state from query 1

- Resume the request after query 2 completion

- Re-enter the original servlet and finish

ARD:

- Enter the original servlet using thread pool A.

- Do async include using thread pool B to the servlet that does query 1

- Concurrently, the original servlet processing continues

- Thread from thread pool A waits for results from query 1.

- Do async include using thread pool B to the servlet that does query 2

- Query 2 returns and finishes the response

ARD Disadvantages:

- Because ARD does not have the ability to re-dispatch, we end up blocking the original thread to wait on the results of query 1.
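In the same plain-Java analogy (hypothetical class and query names again), the dependency in Example 2 is what forces the wait: query 2 cannot be submitted until query 1's result exists, so the request thread blocks on the first future.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

class DependentQueries {
    // Hypothetical slow queries; query 2 needs state produced by query 1.
    static String query1() {
        return "customerId=42";
    }

    static String query2(String stateFromQuery1) {
        return "orders for " + stateFromQuery1;
    }

    // Runs on the request thread ("thread pool A"); the queries run on a
    // separate pool ("thread pool B").
    static String handleRequest() {
        ExecutorService poolB = Executors.newFixedThreadPool(2);
        try {
            CompletableFuture<String> first = CompletableFuture.supplyAsync(DependentQueries::query1, poolB);
            // ... the request thread can do other work here ...
            String state = first.join(); // without a re-dispatch, the request thread blocks here
            CompletableFuture<String> second = CompletableFuture.supplyAsync(() -> query2(state), poolB);
            return second.join();
        } finally {
            poolB.shutdown();
        }
    }
}
```

Under the EG proposal the container would re-dispatch the request instead of holding a thread; in plain Java the closest equivalent is chaining the second query with `thenCompose`, so the request thread is not held while query 1 runs.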

From these examples, I conclude that ARD is best used for executing requests that each have multiple asynchronous operations that are independent of one another. Also, the context propagation that ARD provides is an added benefit. Similar context propagation may be introduced as an additional specification in Java EE 6, but it will not likely be a prerequisite for asynchronous servlets.

There are certain caveats that should be considered with both the ARD and RRD features; they are not one-size-fits-all solutions. Feel free to check out details in the

Tuesday, October 21, 2008

Using WS-Policy to configure WS-ReliableMessaging

Did I mention that WS-ReliableMessaging has just shipped in WebSphere Application Server v7.0? Previously delivered as part of the Web Services Feature Pack for WebSphere Application Server v6.1, the function has been enhanced with conformance to the WS-I Reliable Secure Profile and with WS-Policy-based configuration of WS-ReliableMessaging.

Using WS-Policy with WS-ReliableMessaging gives you more flexibility over when this quality of service is applied to your Web service messages. We have introduced a new 'strictlyEnforceWSRM' property that can be applied to both the client and the service.

It enables you to choose whether your service and client enforce Reliable Messaging or simply support it. WS-Policy then determines the common configuration option between the client and server. For example, if your server policy states that it supports WS-ReliableMessaging, and the client is configured to enforce WS-ReliableMessaging, then WS-ReliableMessaging will be used. However, if the client has no WS-ReliableMessaging configured, then the server will not use it.
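One way to picture this negotiation is as a WS-Policy-style intersection of the outcomes each side will accept. The sketch below is a hypothetical model, not the WAS API; only `strictlyEnforceWSRM` comes from the post. The post does not say what happens when one side enforces WS-RM and the other has none configured, or when both sides merely support it, so the sketch reports an empty result or both possible outcomes in those cases instead of guessing.

```java
import java.util.EnumSet;
import java.util.Set;

// Hypothetical model: each side's WS-RM configuration is expressed as the set
// of outcomes it accepts, and the common configuration is the intersection.
class RmNegotiation {
    enum Outcome { USE_WSRM, NO_WSRM }

    enum Mode {
        ENFORCE, // strictlyEnforceWSRM set: WS-RM is required
        SUPPORT, // WS-RM offered but not required
        NONE;    // WS-RM not configured

        Set<Outcome> acceptable() {
            switch (this) {
                case ENFORCE:
                    return EnumSet.of(Outcome.USE_WSRM);
                case SUPPORT:
                    return EnumSet.of(Outcome.USE_WSRM, Outcome.NO_WSRM);
                default:
                    return EnumSet.of(Outcome.NO_WSRM);
            }
        }
    }

    // Returns every outcome both sides accept; an empty set means the two
    // configurations have nothing in common.
    static Set<Outcome> common(Mode client, Mode server) {
        Set<Outcome> result = EnumSet.copyOf(client.acceptable());
        result.retainAll(server.acceptable());
        return result;
    }
}
```

The two cases described in the post come out as expected: a client that enforces WS-RM against a server that supports it yields only `USE_WSRM`, and a client with nothing configured against the same server yields only `NO_WSRM`.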

Finally, unlike real snail mail, we have been working hard on WS-ReliableMessaging performance in this release as well. I hope you'll appreciate the results.

Using the Spring Framework with WebSphere Application Server v7.0

AspectJ support

From Spring 2.5 onwards, Spring’s AspectJ support can be utilised. In this example we first define a <tx:advice> that indicates that all methods starting with "get" are PROPAGATION_REQUIRED and all methods starting with "set" are PROPAGATION_REQUIRES_NEW. All other methods use the default transaction settings.
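The method-name rules that this `<tx:advice>` expresses can be sketched in plain Java. This is a hypothetical illustration of the matching idea only, not Spring code: Spring evaluates `<tx:method name="...">` patterns itself and supports more than the trailing-`*` prefix patterns handled here, and the `DEFAULT` label simply stands for "the default transaction settings".

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical sketch of the rules: "get*" -> PROPAGATION_REQUIRED,
// "set*" -> PROPAGATION_REQUIRES_NEW, everything else -> default settings.
class TxRuleMatcher {
    // Rules are checked in insertion order (an assumption of this sketch;
    // Spring has its own rules for choosing between overlapping patterns).
    private final Map<String, String> rules = new LinkedHashMap<>();

    TxRuleMatcher() {
        rules.put("get*", "PROPAGATION_REQUIRED");
        rules.put("set*", "PROPAGATION_REQUIRES_NEW");
    }

    // Handles only patterns of the form "prefix*", as used in this example.
    String propagationFor(String methodName) {
        for (Map.Entry<String, String> rule : rules.entrySet()) {
            String pattern = rule.getKey();
            String prefix = pattern.substring(0, pattern.length() - 1); // drop trailing '*'
            if (methodName.startsWith(prefix)) {
                return rule.getValue();
            }
        }
        return "DEFAULT";
    }
}
```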


Another alternative mechanism for declaring transaction settings is to use the Spring annotation-based transaction support. This requires the use of Java 5+, and therefore cannot be used with WebSphere Application Server V6.0.2.x.

First add the following to the spring.xml configuration:

<tx:annotation-driven/>


The EJB 3.0 specification defines the Java Persistence API (JPA) as the means for providing portable persistent Java entities. WebSphere Application Server V7 and the WebSphere Application Server V6.1 EJB 3 feature pack both provide implementations of EJB 3 and JPA; it is also possible to use the Apache OpenJPA implementation of JPA with WebSphere Application Server V6.1.

Annotation-style injection of a JPA EntityManager is also possible:

@PersistenceContext

<!-- bean post-processor for JPA annotations -->

For JMS message sending or synchronous JMS message receipt, Spring's JmsTemplate can be used. This includes the use of Spring's dynamic destination resolution functionality, both via JNDI and via true dynamic resolution.

The following example shows the configuration of a resource reference for a ConnectionFactory. This reference is mapped during application deployment to point to a configured, managed ConnectionFactory stored in the application server's JNDI namespace. The ConnectionFactory is required to perform messaging and should be injected into the Spring JmsTemplate.

<resource-ref>

<jee:jndi-lookup id="jmsConnectionFactory" jndi-name="jms/myCF"/>

JNDI resolution:

jmsTemplate.send("java:comp/env/jms/myQueue", messageCreator);

Dynamic resolution:

jmsTemplate.send("myQueue", messageCreator);

If you have been using the Spring Framework with WebSphere Application Server already, you may have come across this developerWorks article http://www.ibm.com/developerworks/websphere/techjournal/0609_alcott/0609_alcott.html

It has had a facelift recently, and is updated with new content including further configuration details of using WebSphere Application Server with the Spring Framework.

Friday, October 17, 2008

Asynchronous Request Dispatching, Part 1

This is my first blog so I should say a little about myself before I get into things. My name is Maxim, or Max for short, and I’ve been working on the WebContainer since 2003 in various capacities. In Version 7 of WebSphere Application Server (WAS), I acted as the WebContainer architect and the Servlet Expert Group (EG) member representing IBM.

If you have been following the latest in Servlet technology, you would know that the expert group has finally decided to try to standardize asynchronous servlet support for Servlet 3.0. This came as somewhat of a surprise because I had already been working with my colleague Erinn Koonce on a related feature for WAS version 7 called the Asynchronous Request Dispatcher (ARD). Naturally, as options are discussed in the Servlet EG, I compare them to ARD and think about why you’d want to adopt one over the other.

ARD came about as a result of another feature we put in WAS Version 6.1 called the Remote Request Dispatcher (RRD). RRD was a requirement from WebSphere Portal to help them support Web Services for Remote Portlets (WSRP). The problem that WSRP tries to solve is that in some Portal environments, one problem web module can bring down the entire application server and its applications. The natural response is to just add more resources to the deployment. However, this may be overkill for the web modules that are well behaved. And because many applications consist of multiple web modules that interact with one another through request dispatcher includes, all of those web modules had to reside on the same server.

RRD provided the mechanism to split these applications across other servers or clusters without having to rewrite the interaction logic between web modules. However, there was a major drawback: the cost of packaging and sending the metadata across the network for each remote request dispatch. To help alleviate this, Portal wanted a mechanism to execute these remote request dispatches asynchronously. Thus, ARD was born.

The Asynchronous Request Dispatcher does the following:

- Allows request dispatch includes to execute asynchronously and concurrently

- Maintains proper ordering of response output

- Propagates request and thread context

- Allows decoupling of the dispatching of the request with the position where the content should be inserted

- Allows for client side aggregation of results

In my next blog, I will discuss the differences between the two proposals.

Monday, October 13, 2008

New WAS v7.0 Web Services Functionality

The work (from a technology perspective) focused on two specific areas:

- JCP-based programming model updates

- Continuation of filling out the Web Services Roadmap

Extending beyond what's in just the JCP specification, the combination of EJB 3.0 beans and JAX-WS annotations also brings support for SOAP/JMS-based beans to JAX-WS-based services. This makes it consistent for those developers looking to use existing reliable transports as a way to send/receive their web services requests. As part of that support, we are tracking the emerging SOAP/JMS standard being developed at W3C.

With respect to filling out the Web Services roadmap, WebSphere v7.0 upgrades support for numerous OASIS and W3C specifications to their official standardized levels (while still supporting the pre-OASIS levels of versions previously introduced in WebSphere). OASIS WS-AtomicTransaction and WS-BusinessActivity were both upgraded to their 1.1 levels, so WebSphere now supports both the 1.0 and 1.1 levels. The OASIS WS-Trust and WS-SecureConversation specifications have also been upgraded to their OASIS 1.3 levels, and support for OASIS WS-SecurityPolicy 1.2 has been introduced. The OASIS Kerberos Token Profile 1.1 is also now supported in v7.0, providing single sign-on with Kerberos tokens.

The addition of WS-SecurityPolicy also means that we've introduced support for the W3C WS-Policy 1.5 specification. As such, WebSphere can now expose WS-Policy assertions for services with WSDL endpoints, describing the qualities of service attached at each endpoint (including Security, ReliableMessaging, Addressing, and Transactions). That information is available via the typical ?wsdl exposed for the service, as well as through a WS-MetadataExchange request to the service endpoint.

To continue improving the ease-of-use experience for WS-Policy, WebSphere also lets the client side configure itself based on the policy assertions exposed in the published service's WSDL file. This makes it easy to have the client configure itself (or calculate an effective policy based on the client's capabilities).

In addition to the standards upgrades, v7.0 continues to focus on other enhancements to the existing functionality (both functional and non-functional), which further improve the maturity and ease of use of web services development and administration. For example, WS-Notification services can now take advantage of PolicySets. PolicySets themselves have been enhanced to support naming both the configurations and the bindings. These can now be easily imported and exported, so that pre-configured systems can be moved from one topology to another, for example from a development environment to a test environment to a production environment.

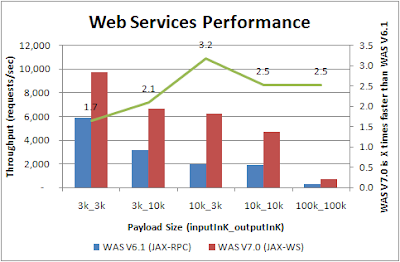

Andrew Spyker had already talked about some of the dramatic performance improvements introduced in v7.0 for web services.

To make a long story short, there's lots of good new stuff in the web services space. I hope you find the links provided above a good starting point for more information on the topics discussed.

New WAS v7.0 SIP Function

First, we added support for the following RFCs:

- 3263 - Locating SIP Servers

- 3311 - The SIP Update method

- 3325 - Private Extensions to the Session Initiation Protocol (SIP) for Asserted Identity within Trusted Networks

- 3891 - The SIP Replaces Header

- 3911 - The SIP Join Header

- 4475 - SIP Torture Test Messages

In WAS v6.1, we supported RFC 3263 except for section 5; this release completes section 5. The final RFC in the list, SIP torture test messages, refers more to a testing effort than to function. This torture testing, combined with our already rigorous telco carrier-grade testing, will help make WAS v7.0 one of the most stable SIP application servers on the market.

Beyond the additional standards support, the SIP Proxy that fronts the application server has several enhancements. It can now support DMZ deployments as discussed here, clustering of the proxy servers when behind the firewall, and improved load balancing to further reduce call loss in some error conditions associated with retransmissions. Finally, in our converged servlet container, users will notice that digest authentication support has been made much better.

Friday, October 10, 2008

What's NEW in WebSphere v7

Here are links to the developerWorks PodCast site and a little teaser posted as a podcast announcing the chat.

Tuesday, October 7, 2008

DMZ-hardened WebSphere Proxy

In WAS v7, customers have access to a DMZ-hardened version of the proxy server. This server ships on a separate installer that contains a subset of the full WAS ND installation. It contains a few notable differences from the ND install that make it suitable for installation in a DMZ:

- No JDK: The secure proxy utilizes only the JRE, so no compiler is available in the DMZ.

- Fewer Listening Ports: The secure proxy can be configured to have as few as two listening ports (HTTP and HTTPS).

- Slimmer set of jars: Since the proxy does not require certain functionality (e.g. web container, EJB container, web services, etc.), jars containing this function are omitted from the install for security and memory footprint purposes.

- Slimmer set of active services: The secure proxy utilizes runtime provisioning (new in v7) to start only the required services. Services like JNDI, application install, and ORB are not started.

The DMZ Secure Proxy Server is a nice upgrade over the IHS plug-in and Edge Proxy in terms of feature set, scalability, performance, and WAS integration and I am very excited to see customers begin reaping the benefits of deploying it.

Friday, October 3, 2008

Join me in Harrisburg, PA on the 15th to talk about BPM and SOA

Thursday, October 2, 2008

v7 UPDI "Update Installer" and v7 IF "Installation Factory" (a really nifty tool)

Since UPDI is the only way to update WebSphere servers, every customer already knows about it (if you really don't, please immediately see the WebSphere v7 InfoCenter UPDI information).

Many (most?) customers have still not discovered the nifty "Installation Factory" (IF) tool. In a nutshell, IF is a way for a customer to merge an initial WebSphere 6.1.0.0 (or 7.0.0.0) install, a FixPack (say 6.1.0.17), and multiple interim fixes (iFixes) into a single, smaller install called a "Customized Install Package" (CIP). The resulting CIP can be used to do a "scratch" install of that combination, or to do a "slip" upgrade of an existing installation. IF can even merge several product CIPs into a single combined "Integrated Install Package" (IIP). If you are not familiar with IF, then I suggest you browse the IF information in the WebSphere v7 InfoCenter, and also read the developerWorks article "Using Custom Installation Packages to install and update WebSphere Application Server in large development environments". Even if you are still on v6.1, you can download and use IF-v7 to great advantage. Give the preceding article (written for IF-v6.1, but still fully applicable to v7) a read and I guarantee you will be pleasantly surprised. The most common comment I hear from customers is "why didn't someone tell me about IF?", so you have now been told. :-)

Wednesday, October 1, 2008

64-bit Performance Throughput/Memory Improvements in WAS V7.0

Tuesday, September 30, 2008

EJB 3.0 Performance Improvements in WAS V7.0 (up 23%)

Monday, September 29, 2008

What's so great about WS-Policy?

So, exactly what does WS-Policy give us? Conceptually, it's pretty simple: a standard XML format for expressing requirements or capabilities (policies) of a system. To be more precise, it just provides a framework for expressing policies. This is an excellent model for flexibility and extensibility because you can add policies of any 'flavour' within this framework.

A policy flavour or 'domain' might be proprietary, for example a policy relating to logging. Alternatively, you can express policies relating to pre-canned standard domains such as WS-SecurityPolicy. This opens a whole new opportunity: as long as communicating systems understand WS-Policy and the same policy domains, they can exchange information about requirements and capabilities in a standard format. Here are some example uses of this:

- A client could configure itself based on a server's configuration (even if the client and server use different proprietary formats for representing their configurations internally)

- In a large, heterogeneous environment, a standard policy configuration for many machines could be held in a central repository

- A service endpoint could advertise its requirements to all its clients in a non-proprietary way

Finally, WS-Policy provides an algorithm (called intersection) for combining two policies to find a policy acceptable to all parties. So if a client has its own requirements but also needs to conform to the requirements of the service, you can take the two policies, perform intersection, and out pops a policy acceptable to both the client and the service.
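A simplified sketch of intersection, assuming both policies are already in normal form (a list of alternatives, each a set of assertion types). Real WS-Policy 1.5 compatibility also compares nested policies and lets each policy domain refine the test; here two alternatives are treated as compatible only when they contain the same assertion types. `PolicyIntersection` and the assertion names in the usage note are illustrative.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Simplified sketch of WS-Policy intersection (not the WAS implementation).
class PolicyIntersection {
    // Each policy is a list of alternatives; each alternative is a set of
    // assertion type names (e.g. QNames written as "prefix:LocalName").
    static List<Set<String>> intersect(List<Set<String>> a, List<Set<String>> b) {
        List<Set<String>> result = new ArrayList<>();
        for (Set<String> altA : a) {
            for (Set<String> altB : b) {
                // Simplification: compatible means "same assertion types".
                if (altA.equals(altB)) {
                    // The intersected alternative carries the assertions of both
                    // alternatives (identical types under this simplification).
                    Set<String> merged = new HashSet<>(altA);
                    merged.addAll(altB);
                    result.add(merged);
                }
            }
        }
        return result; // empty: no alternative is acceptable to both parties
    }
}
```

For example, a client that accepts either Addressing alone or Addressing plus reliable messaging, intersected with a service that requires both, yields the single alternative containing both assertions.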

More about WS-Policy support in WAS 7 to follow...

Web Service Performance Improvements in WAS V7.0 (up to 3x)

Thursday, September 25, 2008

Need help with WebSphere scripting..?

WebSphere scripting is a detailed subject with lots of capability. Administrators often ask me questions like: "I am new to WebSphere, where do I begin?", "I used to use the console UI, but now we want to automate all of our WebSphere administration; where do I begin?" or "I know my script command works, but how can I confirm that it covers all the scenarios or topologies?" The Jython editor in Rational Application Developer goes a long way toward boosting the productivity of WebSphere script developers. The script library that we ship with WebSphere 7.0 is another good source for administrators trying to automate WebSphere administration tasks. It contains a number of good scripts for common administrative functions, such as:

- Application management: install, uninstall, update, start, stop

- Server/Cluster management: create, delete, update, start, stop server or cluster. Need a quick example to set a server JVM property or trace specification? You will find it in there.

- Resource Manipulation: create/manipulate resources for JDBC, J2C, JMS and so on

- Security configuration: manipulate authorization for users and groups

- Ajay

To run WebSphere v7, first you have to install ...

Linux/AIX/HP-UX/Solaris non-root installs are fully supported, which sounds fairly straightforward but had huge internal impacts. Installation performance has also improved, so the significantly enhanced v7 servers still install in the same time as the previous v6.1 servers. Keeping install time the same while laying down several hundred more megabytes was a challenge, but the team found many places where a few seconds here and there could be shaved, and the net result is an almost identical install time for v7 and v6.1.

So, the install itself should hold "no surprises"; the real changes are in WebSphere v7 itself. As soon as you can get it, give it a spin...

Wednesday, September 24, 2008

Have it your way

WebSphere Application Server (WAS) has been on a path for some time now toward enabling custom solutions. Starting way back with WAS 6.0 (2004), astute system administrators may have noticed that we started packaging the WebSphere runtime as OSGi bundles located in the "plugins" directory. OSGi allows for some separation of concerns and decoupled code selection, as well as dynamic hook points for integration. This, in turn, allows WAS to be configured in a variety of ways that were difficult or impossible in the past.

Take, for example, the use of open source. In an application, it can help the developer achieve their goals quickly by building on the efforts of the open source community rather than reinventing similar function. The same can also be said for the server. If you are wondering how much open source gets used in creating WAS, try taking a look at the

Another area where OSGi has helped is server footprint. OSGi allows for dynamic code use and makes load on first use possible. This means you now have the option of configuring the server to use only as much of the runtime as is necessary. OSGi alone can't do this, so a fair amount of work has gone into enabling the WAS version 7 runtime for dynamic provisioning; some of it can be automated, and some must be configured manually. One of the most frequent questions I get about this is how much it will reduce the server footprint. As with all performance-related questions, it depends on the scenario. I will say that we've only touched the tip of the iceberg of possibilities here, and I think we've got a good head start on the competition. For more details, stay tuned for Andrew Spyker's upcoming posts on WAS performance.
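The general idea behind load on first use can be illustrated with a small Python sketch. This is not how WAS or OSGi implement dynamic provisioning; the registry class and the component names are hypothetical, and a "component" is simply created the first time something asks for it, so unused parts of the runtime never take up memory.

```python
class LazyRegistry:
    """Hypothetical registry: components are registered as factories
    and only created ("loaded") the first time they are requested."""

    def __init__(self):
        self._factories = {}
        self._instances = {}

    def register(self, name, factory):
        self._factories[name] = factory

    def get(self, name):
        if name not in self._instances:
            # First use: create the component now and remember it.
            self._instances[name] = self._factories[name]()
        return self._instances[name]

    def loaded(self):
        """Names of components that have actually been created."""
        return sorted(self._instances)


created = []


def make_factory(name):
    def factory():
        created.append(name)  # record when the component is really built
        return {"component": name}
    return factory


registry = LazyRegistry()
for component in ("web_container", "ejb_container", "messaging"):
    registry.register(component, make_factory(component))

# An application that only serves web traffic touches only one component.
first = registry.get("web_container")
second = registry.get("web_container")
```

Registering a component is cheap; the cost of building it is paid only if and when it is used, which is the footprint trade-off described above.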

Tuesday, September 23, 2008

WebSphere Application Server V7.0 Messaging

To help you discover the new features contained in V7.0 we'll be blogging them at WebSphere and Messaging over the coming weeks.

Here's a taster of some of the new features of WAS V7.0 that we'll cover:

- Consumability improvements

- New console wizards to make configuration easier

- Improved facilities for monitoring and control

- Improved integration with WebSphere MQ

- Using WMQ directly from WAS via the new JCA 1.5 Resource Adapter

- Using WMQ via the SIBus - increased platform coverage and additional facilities

- Clustering improvements

- Better workload balancing

- New options for message routing and visibility

- Response routing within a cluster

- Additional options for MDB activations

- Security changes

- Connectivity from client environments and from servers outside the cell

- Performance improvements

Monday, September 22, 2008

WebSphere and Java Persistence

In WebSphere v7, the WebSphere JPA solution provides the following features beyond the base JPA specification (those marked with an asterisk were provided in the Feature Pack for EJB 3.0 as well):

- Performance Improvements

- DB2 pureQuery (Static SQL) integration

- ObjectGrid Cache plugin

- SQL Statement Batching *

- DB2 Select Statement Optimizations *

- Consumability Improvements

- Access Intent support

- XML Column Mapping *

- DB2Diagnosable exception processing *

- Enhanced tracing using AspectJ *

- Database generated version IDs *

- Command scripting (.bat/.sh) *

- Globalization

- NLS Message Files *

More information on these features can be found in the InfoCenter. Due to the numerous requests we receive concerning the WebSphere JPA solution, we have also decided to create a blog devoted to WebSphere and Java Persistence. Please visit and share your comments as we continue to populate this avenue for sharing information.

Friday, September 19, 2008

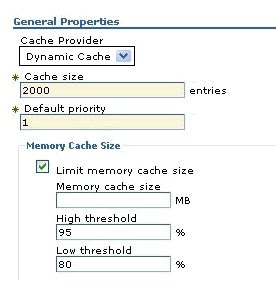

New Dynacache Features in WebSphere 7 - Part 1

I am excited about the new Dynacache heap management feature in WAS 7. The primary motivation for introducing this feature is serviceability. Customers often trip themselves up by caching too much. I have lost count of the number of times I have been hauled out of bed for a customer critical situation where the server was down due to an out-of-memory (OOM) error. It is extremely important to size the cache well. Unfortunately, this is not easy to do, and in some cases pre-production stress testing does not simulate real-world traffic; the cache is underutilized during testing, which gives false confidence in its capacity.

This is not an easy problem to solve. Java has no sizeof operator that tells us the size of an object on the JVM heap, so we have to use all sorts of smarts, trickery, and some help from the application developer to determine the total amount of JVM heap memory taken up by the cache. All earlier techniques for determining cache size rely on serializing the cached objects and metadata, because that is the only way to accurately determine the size of the objects. In WebSphere 7 we have taken a much more lightweight approach that does not rely on serialization to determine the cache heap size.
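For comparison, here is a rough Python analogue of the serialization-based approach (not WebSphere code): the size of a cached value is estimated by serializing it and counting the bytes. The helper name is hypothetical, and the serialized length only approximates the in-memory footprint; the point is that every measurement pays for a full serialization of the object graph.

```python
import pickle


def serialized_size(obj):
    """Estimate an object's size by serializing it and counting bytes.
    Every call pays for a full serialization of the object graph."""
    return len(pickle.dumps(obj, protocol=pickle.HIGHEST_PROTOCOL))


small = serialized_size({"user": "u1", "items": list(range(10))})
large = serialized_size({"user": "u1", "items": list(range(10000))})
```

Doing this for every cache entry on every change is exactly the overhead a self-reported size avoids.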

Most application servers allow cache size to be controlled by number of entries. We are taking cache size management to the next level in WebSphere Application Server 7.

What exactly does Dynacache provide?

The WAS Dynacache component provides the ability to constrain the cache in terms of the JVM heap. In addition to specifying the cache size in MB, Dynacache also allows customers to set a high water mark and a low water mark for the cache heap consumed. Once cache heap memory reaches the high water mark, Dynacache either discards the least recently used items or evicts them to disk, until the cache is brought down to the low water mark. This ability to limit the cache in terms of the JVM heap is available only if the objects put into the cache implement the com.ibm.websphere.cache.Sizeable interface. When servlet caching is enabled, all cached JSP and servlet responses are Sizeable. The interface has a single method that returns the size, in bytes, of the object put into the cache, and Dynacache uses it to estimate the heap size of the cache. This feature is OFF by default; a customer has to explicitly enable it and set the cache limits and watermarks.
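The watermark behavior described above can be sketched in Python. This is a simplified model, not the Dynacache implementation: the estimated_size() method is a hypothetical stand-in for a Sizeable-style interface, sizes are abstract units rather than real heap bytes, and the optional on_evict callback stands in for offloading entries to disk (when it is None, evicted entries are simply discarded).

```python
from collections import OrderedDict


class HeapBoundedCache:
    """Cache bounded by the estimated size of its values, not entry count."""

    def __init__(self, high_water, low_water, on_evict=None):
        if not 0 < low_water <= high_water:
            raise ValueError("need 0 < low_water <= high_water")
        self.high_water = high_water
        self.low_water = low_water
        self.on_evict = on_evict  # hypothetical disk offload; None = discard
        self._entries = OrderedDict()  # ordered least to most recently used
        self.total = 0  # sum of estimated_size() over cached values

    def get(self, key):
        value = self._entries[key]
        self._entries.move_to_end(key)  # mark as most recently used
        return value

    def put(self, key, value):
        old = self._entries.pop(key, None)
        if old is not None:
            self.total -= old.estimated_size()
        self._entries[key] = value
        self.total += value.estimated_size()
        if self.total >= self.high_water:
            self._shrink()

    def _shrink(self):
        # Evict least recently used entries until at or below the low water mark.
        while self.total > self.low_water and self._entries:
            key, value = self._entries.popitem(last=False)
            self.total -= value.estimated_size()
            if self.on_evict is not None:
                self.on_evict(key, value)

    def __contains__(self, key):
        return key in self._entries

    def __len__(self):
        return len(self._entries)


class Blob:
    """Hypothetical cached value that reports its own size in bytes."""

    def __init__(self, nbytes):
        self.data = bytes(nbytes)

    def estimated_size(self):
        return len(self.data)


evicted = []
cache = HeapBoundedCache(high_water=100, low_water=70,
                         on_evict=lambda key, value: evicted.append(key))
cache.put("a", Blob(40))
cache.put("b", Blob(40))
cache.get("a")            # "a" is now more recently used than "b"
cache.put("c", Blob(30))  # total hits 110 >= 100, so "b" is evicted
```

Shrinking all the way down to the low water mark, rather than just below the high one, gives the cache some headroom so that it does not have to evict on every subsequent put.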

How to enable this feature?

On the Dynacache service panel, WebSphere exposes the Dynamic Cache object cache service and the Dynamic Cache servlet cache service. The object cache service is always started at server startup. The servlet cache service is started when servlet caching is enabled in the Web container panel. There is now a checkbox for Memory Cache Size, which controls the memory cache size with high/low thresholds: in addition to specifying the size, the customer can specify a range for the heap by setting threshold limits. The servlet and object cache instances under Resources -> Cache instances also include the Memory Cache Size control.

WebSphere Application Server Version 7.0 Performance Highlights

- General Java EE

- Web Services including JAX-WS and WS-* standards support

- Persistence including EJB3 and JPA

- Startup Time and Memory Footprint

- Security including Java SE, Administration, Java EE, and new features

- Java SE Performance

- Hardware exploitation (64-bit, Multicore, Virtualization)

- Support for SIP-based communication in our converged servlet container

Wednesday, September 17, 2008

Documentation Approach about Web Services for WebSphere

Lastly, another approach is the use of Redbooks. Members of our development organization worked with other IBM folks to help produce a Feature Pack for Web Services Redbook. These are all meant to complement our existing Information Center and provide specific, useful scenarios in a more end-to-end fashion to help our developers get on board.

I hope you like the approach, and I would be interested in hearing your feedback.

Thursday, August 14, 2008

In case you thought the SCA rock stopped rolling...

Beta details here. Of course, our code is based on the Tuscany open source project which I've blogged about before.

I want to thank all of my development and beta team members for all of their hard work.

Friday, July 11, 2008

The TPC is working on a SOA Benchmark proposal submitted by IBM

Thursday, June 26, 2008

eWeek: "IBM WebSphere at 10"

Excerpt:

"IBM Senior Vice President and Software Group General Manager Steve Mills met with three of his top lieutenants in his office back in 1997 to discuss the "Webification" of IBM's enterprise tools. Out of that discussion the IBM WebSphere Application Server was born."

I had just joined IBM in 1997 and was working on DB2 for OS/390. While I was trying to figure out what this "mainframe" thing was and why anyone would want to manage it from OS/2, smart folks in Raleigh were creating the future. Well done, gents.